Has the thorny, decades-old problem of compositionality finally be solved?

In an essay yesterday called, Now I Really Won That AI Bet the well-known blogger Scott Alexander, more or less made that argument yesterday, proclaiming victory on old bet (with someone else, not me) and implying that this meant that AI has “master[ed] image compositionality”.

Compositionality is, roughly speaking, about understanding and/or constructing wholes in terms of their parts. The crux of Alexander’s crowing is that a few years ago he predicted that generative AI would succeed on a certain set of prompts by June 2025, and, sure enough, now they have.

To be sure, Alexander is on strong grounds to say he won the specifics of his bet, and correct that the field has made some progress. But at the same time he has accidentally slipped into very weak form of argument, often known as the motte and bailey, in which someone proves a mild assertion (the motte) and erroneously asserts victory on a larger, more controversial question (the bailey).

§

The motte here is the bet. I agree that Alexander won his bet. He said that Generative AI would make progress on a certain specific set of prompts, and it has.

Here’s an example of his on which GPT4o now succeeds.

The bailey is that victory on this particular bet (with someone random person that I have never even heard of) means that generative AI has “master[ed]” compositionality with respect to images.

I didn’t get involved in the bet in 2022 because I thought it was a stupid bet. Why? Because GenAI’s can memorize anything, and you can build a bunch of augmented data to address any specific known problem. That doesn’t mean you have a general solution.

The field of AI is littered with Band-Aid solutions that work for one problem, and not the next.

It’s no different here. It took me only few minutes to find many examples in which GPT 4o (the same model Alexander used) failed on tasks that turn on compositionality.

Here’s one: “draw five characters on a stage, from left to right, a one-armed person, a five-legged dog, a bear with three heads, a child carrying a donkey, and a doctor carrying a bicycle with no wheels” . GPT 4o failed in four consecutive tests:

In my first try, above, the man erroneously had two arms, rather than one, the bear had two heads rather than three, and the bicycle had one wheel rather than zero. Three other tries yielded similar errors, like this one, never quite returning what I asked for.

(GPT 4o also failed to accurately diagnose its own errors, misreading its own output, which represent a different kind of failure of compositionality.)

A few further minutes’ experimentation quickly revealed several other easily-elicited types of failures, mainly involving parts and wholes (which Ernie Davis and I have discussed here numerous times).

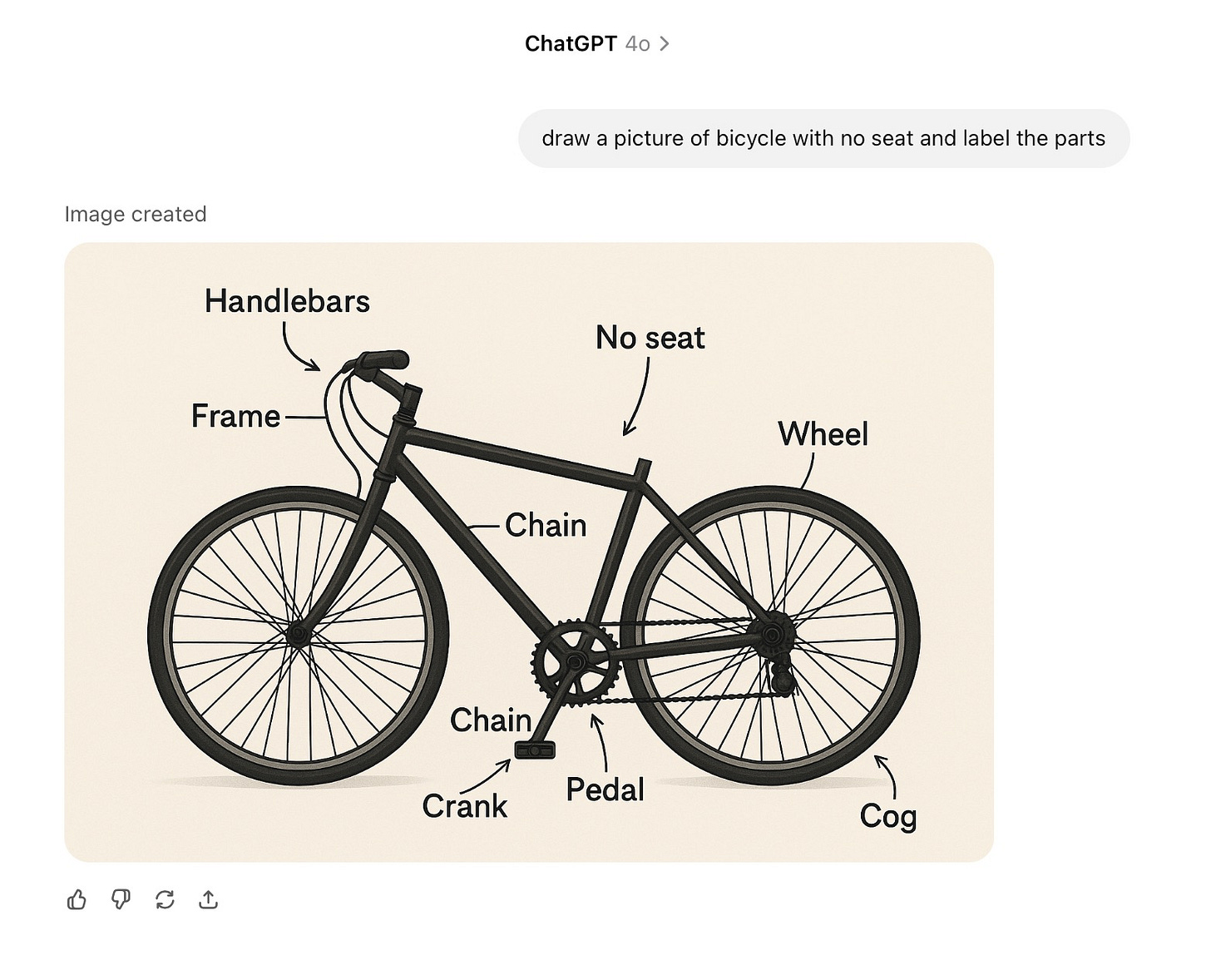

Or to take a different kind of example, similar to one I showed with o3 a couple weeks ago, labeling of parts:

My June 22, 2025 update, Image Generation: Still Crazy After All These Years to my earlier 2022 Horse Rides Astronaut was filled with more examples that show ongoing failures in compositionality, including some with the arguably better o3.

Performance on Chollet et al’s ARC-AGI 2, is also from what I understand still poor, including in a section focused on compositionality.

To be fair, GPT-4o is, in my informal testing, markedly better than DALL-E 2, e.g., correctly nailing for example “Picture of a scientist in a nightclub holding a blue frisbee with a red basketball on top”.

But it is not reasonable to conclude from the occasional success on one sort of publicly-discussed set of prompts that GPT has mastered compositionality writ large.

§

More broadly, concluding anything from specific prompts crafted and shared publicly years ago is a mistake. And not a new one either – as I pointed out to Alexander in June 2022 essay (that he read and replied to) called What does it mean when an AI fails? A Reply to SlateStarCodex’s riff on Gary Marcus. Boldface in the original:

In the end, the real question is not about the replicability of the specific strings … but about the replicability of the general phenomena.

Alexander, even after corrected three years ago, still largely confuses success on a prompt with general, broad competence at a particular ability.

This conflation is problematic for multiple reasons. One is data contamination, since any given prompt that has been circulated publicly potentially becomes part of the training data, including with custom data augmentation, especially with large armies of subcontractors. The steelman version of the argument would have considered this; Alexander didn’t address at all.

Another is that psychology can rarely be inferred directly from small samples of data, as for example cognitive psychology John R. Anderson argued in the early 1970, in part because many possible answers can be generated from multiple underlying mechanisms. Looking at a single output rarely tells you much. In the particular case, we know that the system succeeds at previously-discussed prompts but does less well on ones not previously-discussed. That tells us something about the internal mechanism, and suggests that it is far from robust. Looking only at the previously discussed prompts in isolation yields a misleading picture.

Another lesson from cognitive psychology is that anyone looking for a single test to conclude anything is probably barking up the wrong tree.

§

Overall, the form of Alexander’s argument is simply flawed:

I (Scott) made a bet with some rando in 2022 that (generative) AI would be able to perform well a on certain specific set of prompts.

Those prompts have something do with compositionality [assembling wholes from prompts]

Now AI well does on those prompts.

Therefore AI has mastered compositionality.

Concluding that success on some prompts implies overall mastery is a textbook example of the fallacy of composition, of inferring that what is true of some subset is necessarily true of the whole. It is an enormous, unjustified leap from the motte of “the systems can now solve some compositionality problems” to the bailey of “the systems have mastered compositionality”.

§

Allow me to end with an open letter:

Dear Scott,

Congratulations on winning your bet with Vitor. You are known for “steelmanning” arguments: considering the strongest possible version of an argument and trying to refute that. I wholly support this approach, but I don’t think it was best represented here. How about you take on a stronger version of the argument? Want another bet?

It took me less than an hour to find half a dozen clear counterexamples in which ChatGPT 4o failed with respect to compositionality, evident even to a teenager. When we are really at AGI it will be much harder to do so. I predict that such counterexamples will still be easy to find at the end of 2027 because of limitations inherent in LLMs.

Let’s put some more steel in your bet.

– Gary