SUNNYVALE, Calif., May 15, 2025--(BUSINESS WIRE)--Cerebras today announced the launch of Qwen3-32B, one of the most advanced open-weight models in the world, now available on the Cerebras Inference Platform. Developed by Alibaba, Qwen3-32B rivals the performance of leading models such as GPT-4.1 and DeepSeek R1, and now, for the first time, it runs on Cerebras with real-time responsiveness.

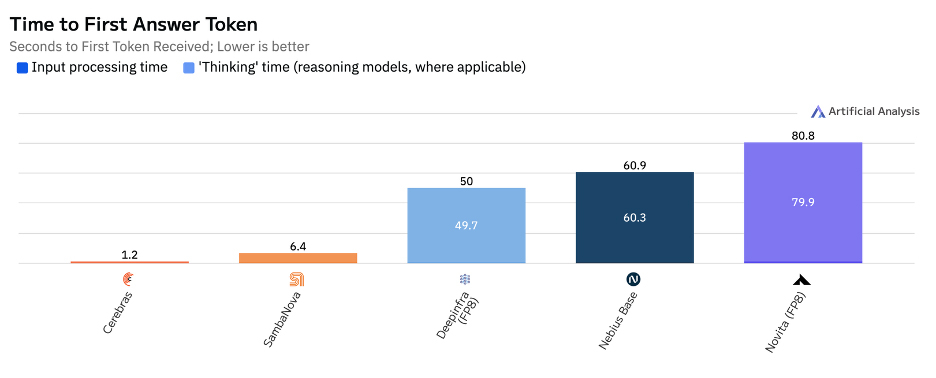

Qwen3-32B on Cerebras performs sophisticated reasoning and returns the answer in just 1.2 seconds — up to 60x faster than comparable reasoning models such as DeepSeek R1 and OpenAI o3. This is the first reasoning model on any hardware to achieve real-time reasoning. Qwen3-32B on Cerebras is the fastest reasoning model API in the world, ready to power production-grade agents, copilots, and automation workloads.

“This is the first time a world-class reasoning model, on par with DeepSeek R1 and OpenAI’s o-series, can return answers instantly,” said Andrew Feldman, CEO and co-founder of Cerebras. “It’s not just fast for a big model. It’s fast enough to reshape how real-time AI gets built.”

The First Real-Time Reasoning Model

Reasoning models are widely recognized as the most powerful class of large language models—capable of multi-step logic, tool use, and structured decision-making. But until now, they’ve come with a tradeoff: latency. Inference often takes 30–90 seconds, making them impractical for responsive user experiences.

Cerebras eliminates that bottleneck. Qwen3-32B on Cerebras delivers its first token in just one second and completes full reasoning chains in real time. This is the only solution on the market today that combines high intelligence with real-time speed, and it’s available now.

Transparent, Scalable Pricing

Qwen3-32B is available on Cerebras with simple, production-ready pricing:

This is 10x cheaper than GPT-4.1, while offering comparable or better performance.

All developers receive 1 million free tokens per day, with no waitlist. Qwen3-32B is fully open-weight and Apache 2.0 licensed, and can be integrated in seconds using standard OpenAI- or Claude-compatible endpoints.
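As a rough illustration of what integration through an OpenAI-compatible endpoint could look like, the sketch below builds and sends a standard chat completions request using only the Python standard library. The base URL, the model identifier `qwen-3-32b`, and the `CEREBRAS_API_KEY` environment variable are assumptions made for this example and are not stated in this announcement; check the Cerebras documentation for the actual values.

```python
import json
import os
import urllib.request

# Assumptions (not stated in the announcement): the base URL, the model
# identifier, and the environment variable name are illustrative only.
BASE_URL = "https://api.cerebras.ai/v1"
MODEL = "qwen-3-32b"
API_KEY_ENV = "CEREBRAS_API_KEY"


def build_chat_request(prompt, api_key, base_url=BASE_URL, model=MODEL):
    """Build an OpenAI-style chat completions request without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt):
    """Send the request and return the text of the first choice."""
    request = build_chat_request(prompt, os.environ[API_KEY_ENV])
    with urllib.request.urlopen(request, timeout=30) as response:
        body = json.load(response)
    # Standard OpenAI-style response shape: choices[0].message.content
    return body["choices"][0]["message"]["content"]
```

With an API key set in the environment, calling `ask("Summarize this release in one sentence.")` would send the request and return the model's reply. Existing OpenAI client code should need little more than a different base URL and model name, assuming the endpoint follows the standard request shape.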

Qwen3-32B is live now on Cerebras.ai. For teams seeking to build fast, intelligent, production-ready AI systems, it is the most powerful open model you can use today.

About Cerebras Systems

Cerebras Systems is a team of pioneering computer architects, computer scientists, deep learning researchers, and engineers of all types. We have come together to accelerate generative AI by building from the ground up a new class of AI supercomputer. Our flagship product, the CS-3 system, is powered by the world’s largest and fastest commercially available AI processor, our Wafer-Scale Engine-3. CS-3s are quickly and easily clustered together to make the largest AI supercomputers in the world, and make placing models on the supercomputers dead simple by avoiding the complexity of distributed computing. Cerebras Inference delivers breakthrough inference speeds, empowering customers to create cutting-edge AI applications. Leading corporations, research institutions, and governments use Cerebras solutions for the development of pathbreaking proprietary models, and to train open-source models with millions of downloads. Cerebras solutions are available through the Cerebras Cloud and on premises. For further information, visit cerebras.ai or follow us on LinkedIn or X.