Temporal consistency is critical in video prediction to ensure that outputs

are coherent and free of artifacts. Traditional methods, such as temporal

attention and 3D convolution, may struggle with significant object motion and

may not capture long-range temporal dependencies in dynamic scenes. To address

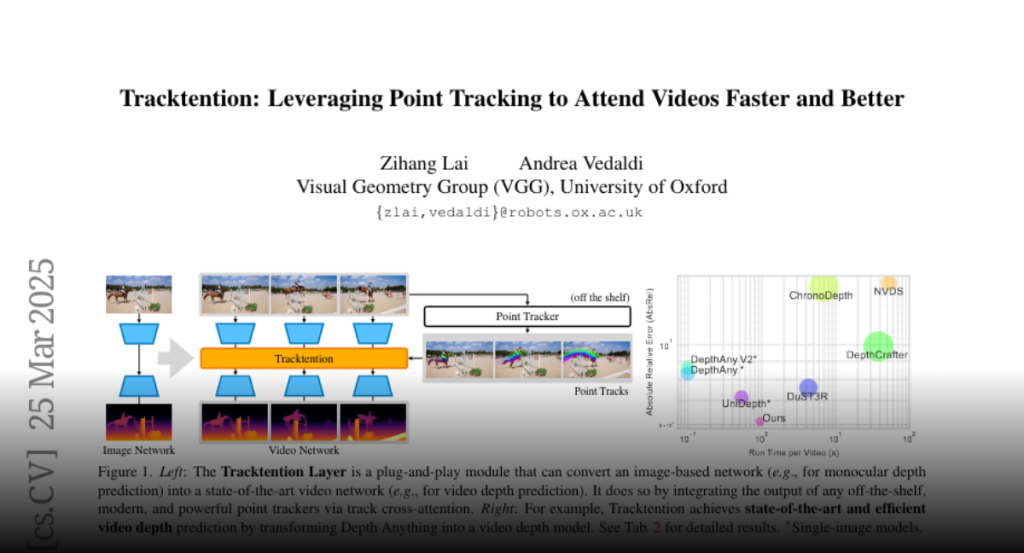

this gap, we propose the Tracktention Layer, a novel architectural component

that explicitly integrates motion information using point tracks, i.e.,

sequences of corresponding points across frames. By incorporating these motion

cues, the Tracktention Layer enhances temporal alignment and effectively

handles complex object motions, maintaining consistent feature representations

over time. Our approach is computationally efficient and can be seamlessly

integrated into existing models, such as Vision Transformers, with minimal

modification. It can be used to upgrade image-only models to state-of-the-art

video ones, sometimes outperforming models natively designed for video

prediction. We demonstrate this on video depth prediction and video

colorization, where models augmented with the Tracktention Layer exhibit

significantly improved temporal consistency compared to baselines.
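The abstract describes using point tracks as motion cues for temporal alignment. To make this idea more concrete, the following is a minimal pure-Python sketch of the general principle. It samples per-frame features at each track's position and then mixes those samples over time, so that information is exchanged between corresponding points rather than between fixed pixel locations. This is not the authors' implementation. The function names (`bilinear_sample`, `temporal_attention`, `track_features`) and the data layout are hypothetical. Parameter-free dot-product attention stands in for whatever learned layers the actual Tracktention Layer uses. The sketch also does not show how the updated track features would be written back into a model's feature maps.

```python
import math
from typing import List, Sequence, Tuple

# Hypothetical data layout, assumed for illustration only:
#   features[t][y][x] -> C-dim feature vector (list of floats) of frame t
#   tracks[n][t]      -> (x, y) position of tracked point n in frame t
Vec = List[float]
FeatureMap = List[List[Vec]]
Track = Sequence[Tuple[float, float]]


def bilinear_sample(fmap: FeatureMap, x: float, y: float) -> Vec:
    """Bilinearly interpolate an H x W x C map at continuous (x, y), clamped to the border."""
    h, w = len(fmap), len(fmap[0])
    x = min(max(x, 0.0), w - 1.0)
    y = min(max(y, 0.0), h - 1.0)
    x0, y0 = int(math.floor(x)), int(math.floor(y))
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    ax, ay = x - x0, y - y0
    return [
        (1 - ax) * (1 - ay) * fmap[y0][x0][k]
        + ax * (1 - ay) * fmap[y0][x1][k]
        + (1 - ax) * ay * fmap[y1][x0][k]
        + ax * ay * fmap[y1][x1][k]
        for k in range(len(fmap[0][0]))
    ]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]


def temporal_attention(seq: List[Vec]) -> List[Vec]:
    """Single-head scaled dot-product self-attention over the time axis.

    Stand-in assumption: no learned query/key/value projections.
    """
    c = len(seq[0])
    scale = 1.0 / math.sqrt(c)
    out = []
    for q in seq:
        weights = softmax([scale * sum(a * b for a, b in zip(q, k)) for k in seq])
        out.append([sum(wt * v[i] for wt, v in zip(weights, seq)) for i in range(c)])
    return out


def track_features(features: List[FeatureMap], tracks: List[Track]) -> List[List[Vec]]:
    """Gather features along each point track and mix them across frames.

    Returns one list of T feature vectors per track.
    """
    num_frames = len(features)
    mixed = []
    for track in tracks:
        # Follow the point through time instead of a fixed pixel location.
        samples = [bilinear_sample(features[t], *track[t]) for t in range(num_frames)]
        mixed.append(temporal_attention(samples))
    return mixed
```

Here the track follows a point that moves from pixel (0, 0) in frame 0 to pixel (1, 1) in frame 1. The temporal mixing therefore operates on features of the same physical point, even though that point changes location. A per-pixel temporal layer at a fixed location would compare unrelated content instead.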