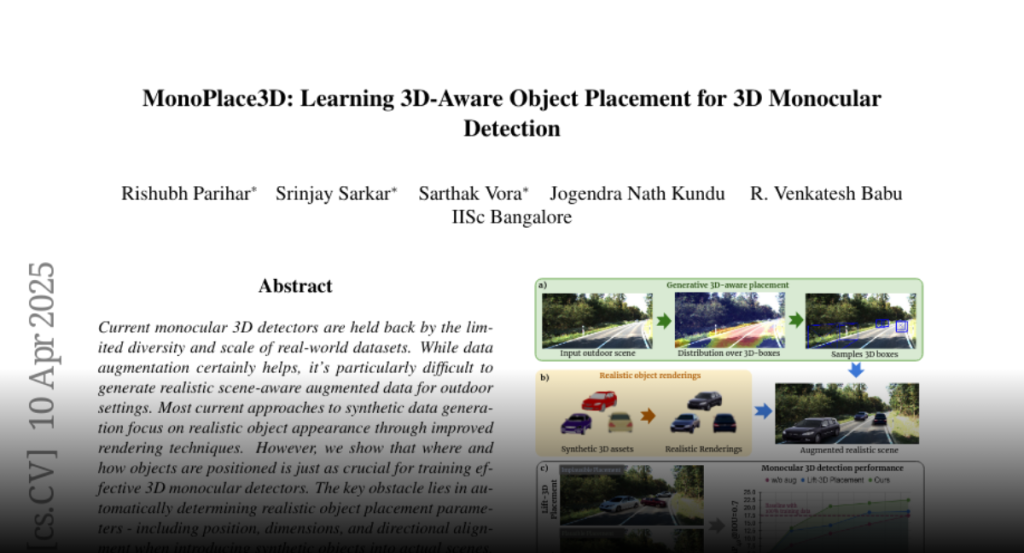

Current monocular 3D detectors are held back by the limited diversity and
scale of real-world datasets. While data augmentation helps, it is
particularly difficult to generate realistic, scene-aware augmented data for
outdoor settings. Most current approaches to synthetic data generation focus on
realistic object appearance through improved rendering techniques. However, we
show that where and how objects are positioned is just as crucial for training
effective monocular 3D detectors. The key obstacle lies in automatically
determining realistic object placement parameters (position, dimensions, and
orientation) when introducing synthetic objects into actual scenes. To address
this, we introduce MonoPlace3D, a novel system that considers the 3D scene
content to create realistic augmentations. Specifically, given a background
scene, MonoPlace3D learns a distribution over plausible 3D bounding boxes.
Subsequently, we render realistic objects and place them according to the
locations sampled from the learned distribution. Our comprehensive evaluation
on two standard datasets, KITTI and NuScenes, demonstrates that MonoPlace3D
significantly improves the accuracy of multiple existing monocular 3D
detectors while being highly data efficient.
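
To make the sample-then-place step concrete, the following is a minimal sketch of drawing 3D box parameters (position, dimensions, heading) from a distribution over boxes. It is not the MonoPlace3D model: the learned, scene-conditioned distribution is replaced here by a hand-specified Gaussian mixture, and every name (`Box3D`, `PlacementMode`, `sample_box`), the ground-plane assumption, and all numeric values are hypothetical and chosen only for illustration.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class Box3D:
    x: float       # lateral position in metres (assumed camera frame)
    y: float       # vertical position in metres
    z: float       # depth in metres
    length: float  # box dimensions in metres
    width: float
    height: float
    yaw: float     # heading in radians


@dataclass
class PlacementMode:
    """One hypothetical mixture component: a weighted Gaussian around a mean box."""
    weight: float
    mean: Box3D
    pos_std: float
    dim_std: float
    yaw_std: float


def wrap_angle(theta: float) -> float:
    """Map an angle to the interval [-pi, pi]."""
    return (theta + math.pi) % (2.0 * math.pi) - math.pi


def sample_box(modes, rng: random.Random) -> Box3D:
    """Pick a component in proportion to its weight, then perturb its mean box."""
    mode = rng.choices(modes, weights=[m.weight for m in modes], k=1)[0]
    mu = mode.mean
    return Box3D(
        x=rng.gauss(mu.x, mode.pos_std),
        y=mu.y,  # assumption: the box stays at the component's ground height
        z=max(0.0, rng.gauss(mu.z, mode.pos_std)),
        length=max(0.1, rng.gauss(mu.length, mode.dim_std)),
        width=max(0.1, rng.gauss(mu.width, mode.dim_std)),
        height=max(0.1, rng.gauss(mu.height, mode.dim_std)),
        yaw=wrap_angle(rng.gauss(mu.yaw, mode.yaw_std)),
    )


# Illustrative values only: two "lanes" with opposite driving directions.
example_modes = [
    PlacementMode(0.6, Box3D(-2.0, 1.6, 20.0, 4.2, 1.8, 1.5, math.pi / 2), 1.0, 0.2, 0.1),
    PlacementMode(0.4, Box3D(2.0, 1.6, 30.0, 4.2, 1.8, 1.5, -math.pi / 2), 1.0, 0.2, 0.1),
]
example_boxes = [sample_box(example_modes, random.Random(0)) for _ in range(3)]
```

In a real pipeline, the sampled boxes would then be handed to a renderer that inserts an object matching each box's position, size, and heading into the background image; that step is omitted here.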