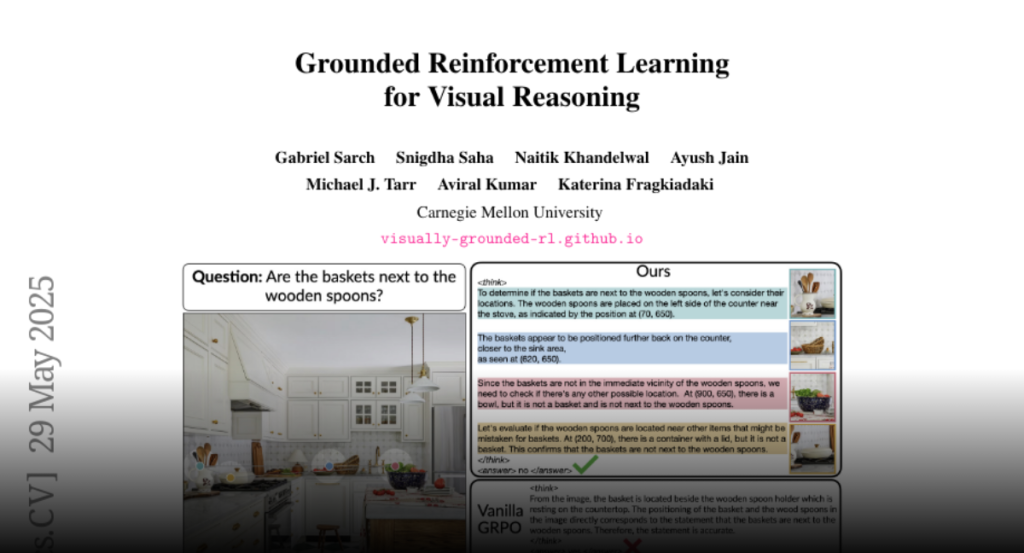

ViGoRL, a vision-language model enhanced with visually grounded reinforcement learning, achieves superior performance across various visual reasoning tasks by dynamically focusing visual attention and grounding reasoning in spatial evidence.

While reinforcement learning (RL) over chains of thought has significantly advanced language models in tasks such as mathematics and coding, visual reasoning introduces added complexity by requiring models to direct visual attention, interpret perceptual inputs, and ground abstract reasoning in spatial evidence. We introduce ViGoRL (Visually Grounded Reinforcement Learning), a vision-language model trained with RL to explicitly anchor each reasoning step to specific visual coordinates. Inspired by human visual decision-making, ViGoRL learns to produce spatially grounded reasoning traces, guiding visual attention to task-relevant regions at each step. When fine-grained exploration is required, our novel multi-turn RL framework enables the model to dynamically zoom into predicted coordinates as reasoning unfolds. Across a diverse set of visual reasoning benchmarks (including SAT-2 and BLINK for spatial reasoning, V*Bench for visual search, and ScreenSpot and VisualWebArena for web-based grounding), ViGoRL consistently outperforms both supervised fine-tuning and conventional RL baselines that lack explicit grounding mechanisms. Incorporating multi-turn RL with zoomed-in visual feedback significantly improves ViGoRL’s performance on localizing small GUI elements and visual search, achieving 86.4% on V*Bench. Additionally, we find that grounding amplifies other visual behaviors such as region exploration, grounded subgoal setting, and visual verification. Finally, human evaluations show that the model’s visual references are not only spatially accurate but also helpful for understanding model reasoning steps. Our results show that visually grounded RL is a strong paradigm for imbuing models with general-purpose visual reasoning.
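
To make the idea of zooming into a predicted coordinate concrete, the following is a minimal sketch of how a crop window around a point might be computed. It is not the authors' implementation: the function name `zoom_box`, its parameters, and the fixed crop size are all hypothetical and chosen only for illustration. The abstract does not specify how the zoomed region is sized or positioned.

```python
def zoom_box(x, y, img_w, img_h, crop_w, crop_h):
    """Return (left, top, right, bottom) for a crop around the point (x, y).

    Hypothetical helper, not taken from the paper. The window is centered on
    the point where possible and shifted so it stays inside the image.
    """
    # A crop can never be larger than the image itself.
    crop_w = min(crop_w, img_w)
    crop_h = min(crop_h, img_h)

    # Center the window on the point, then clamp it to the image bounds.
    left = min(max(x - crop_w // 2, 0), img_w - crop_w)
    top = min(max(y - crop_h // 2, 0), img_h - crop_h)

    return left, top, left + crop_w, top + crop_h


# Example: a predicted point near the bottom-right corner of a 1000x800 image.
box = zoom_box(990, 790, img_w=1000, img_h=800, crop_w=200, crop_h=200)
print(box)
```

The returned tuple follows the (left, upper, right, lower) ordering that common image libraries use for cropping, so in a multi-turn setup of the kind described above, the cropped region could plausibly be fed back to the model as the next visual observation.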