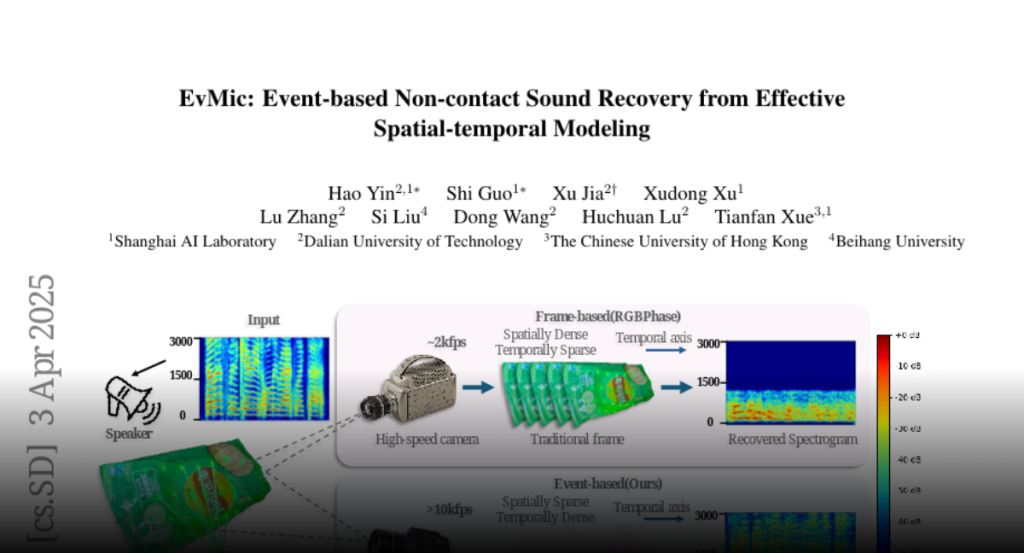

When sound waves hit an object, they induce vibrations that produce subtle, high-frequency visual changes, which can be used to recover the sound. Earlier approaches face trade-offs among sampling rate, bandwidth, field of view, and the simplicity of the optical path. Recent advances in event camera hardware show strong potential for visual sound recovery, because event cameras excel at capturing high-frequency signals. However, existing event-based vibration recovery methods remain sub-optimal for sound recovery. In this work, we propose a novel pipeline for non-contact sound recovery that fully utilizes spatial-temporal information from the event stream. We first generate a large training set using a novel simulation pipeline. We then design a network that leverages the sparsity of events to capture spatial information and uses Mamba to model long-term temporal information. Lastly, we train a spatial aggregation block that aggregates information from different locations to further improve signal quality. To capture event signals caused by sound waves, we also design an imaging system that uses a laser matrix to enhance the gradient, and we collect multiple data sequences for testing. Experimental results on synthetic and real-world data demonstrate the effectiveness of our method.
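
To make the sensing principle concrete, the following is a minimal sketch of the standard idealized event-camera model, in which a pixel fires an event whenever its log intensity changes by a fixed contrast threshold. It is not the simulation pipeline or the network proposed in this work. The function names, the threshold value, and the 440 Hz test signal are all illustrative assumptions.

```python
import math

def simulate_events(log_intensity, threshold=0.05):
    """Idealized contrast-threshold event model for a single pixel.

    Emits (sample_index, polarity) each time the log intensity has moved by at
    least `threshold` from the level stored at the previous event.
    This is a textbook model, not the paper's simulator.
    """
    events = []
    ref = log_intensity[0]
    for i, value in enumerate(log_intensity[1:], start=1):
        # A large change between two samples can trigger several events.
        while value - ref >= threshold:
            ref += threshold
            events.append((i, +1))
        while ref - value >= threshold:
            ref -= threshold
            events.append((i, -1))
    return events

def integrate_events(events, n_samples, threshold=0.05):
    """Rebuild a staircase approximation of the log-intensity change."""
    signal = [0.0] * n_samples
    level = 0.0
    j = 0
    for i in range(n_samples):
        # Apply every event that was stamped with this sample index.
        while j < len(events) and events[j][0] == i:
            level += threshold * events[j][1]
            j += 1
        signal[i] = level
    return signal

# Hypothetical input: a 440 Hz vibration modulating brightness, sampled at 20 kHz.
fs, f0, n = 20000, 440.0, 2000
log_i = [0.3 * math.sin(2 * math.pi * f0 * k / fs) for k in range(n)]

events = simulate_events(log_i)
recovered = integrate_events(events, n)
max_err = max(abs(a - b) for a, b in zip(log_i, recovered))
```

In this noise-free setting, direct integration tracks the input to within one contrast threshold. Real event streams contain noise and threshold mismatch, which is one reason a naive per-pixel integration of this kind is not sufficient for sound recovery.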