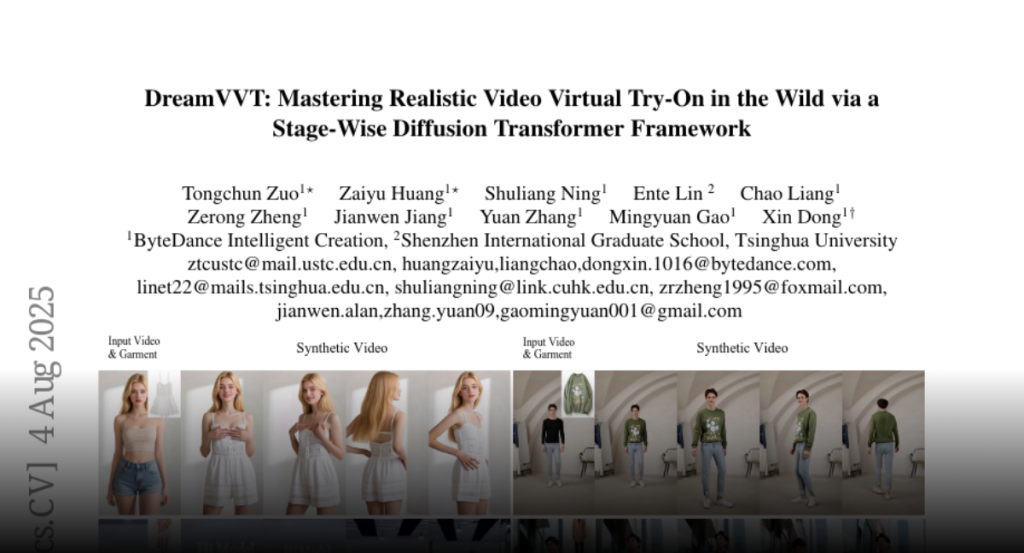

DreamVVT, a two-stage framework using Diffusion Transformers and LoRA adapters, enhances video virtual try-on by leveraging unpaired human-centric data and pretrained models to preserve garment details and temporal consistency.

Video virtual try-on (VVT) technology has garnered considerable academic interest owing to its promising applications in e-commerce advertising and entertainment. However, most existing end-to-end methods rely heavily on scarce paired garment-centric datasets and fail to effectively leverage priors of advanced visual models and test-time inputs, making it challenging to accurately preserve fine-grained garment details and maintain temporal consistency in unconstrained scenarios. To address these challenges, we propose DreamVVT, a carefully designed two-stage framework built upon Diffusion Transformers (DiTs), which is inherently capable of leveraging diverse unpaired human-centric data to enhance adaptability in real-world scenarios. To further leverage prior knowledge from pretrained models and test-time inputs, in the first stage, we sample representative frames from the input video and utilize a multi-frame try-on model integrated with a vision-language model (VLM) to synthesize high-fidelity and semantically consistent keyframe try-on images. These images serve as complementary appearance guidance for subsequent video generation. In the second stage, skeleton maps together with fine-grained motion and appearance descriptions are extracted from the input content, and these, along with the keyframe try-on images, are then fed into a pretrained video generation model enhanced with LoRA adapters. This ensures long-term temporal coherence for unseen regions and enables highly plausible dynamic motions. Extensive quantitative and qualitative experiments demonstrate that DreamVVT surpasses existing methods in preserving detailed garment content and temporal stability in real-world scenarios. Our project page is available at https://virtu-lab.github.io/.
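The first stage begins by sampling representative frames from the input video. The abstract does not state the selection criterion, so the sketch below assumes plain evenly spaced sampling as a stand-in; the function name `sample_keyframe_indices` and its parameters are hypothetical and are not taken from DreamVVT.

```python
def sample_keyframe_indices(num_frames: int, num_keyframes: int) -> list[int]:
    """Pick evenly spaced frame indices, including the first and last frame.

    Assumption: uniform spacing stands in for whatever "representative frame"
    criterion the actual method uses, which the abstract does not specify.
    """
    if num_frames <= 0 or num_keyframes <= 0:
        raise ValueError("num_frames and num_keyframes must be positive")
    if num_keyframes >= num_frames:
        # Fewer frames than requested keyframes: use every frame.
        return list(range(num_frames))
    if num_keyframes == 1:
        # A single keyframe: take the middle of the clip.
        return [num_frames // 2]
    last = num_frames - 1
    # Integer arithmetic keeps indices exact and strictly increasing.
    return [(i * last) // (num_keyframes - 1) for i in range(num_keyframes)]


if __name__ == "__main__":
    print(sample_keyframe_indices(10, 4))
```

In a pipeline of this shape, the selected frames would be passed to the multi-frame try-on model, and the resulting keyframe try-on images would later condition the video generation stage.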