DetailFlow, a coarse-to-fine 1D autoregressive image generation method, improves quality and efficiency by using a novel next-detail prediction strategy, fewer tokens, and a parallel inference mechanism.

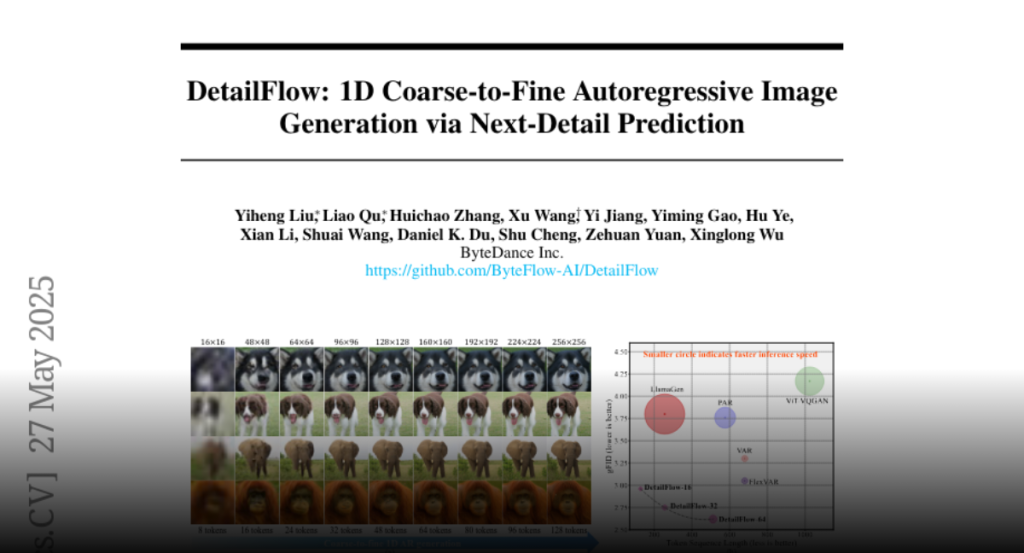

This paper presents DetailFlow, a coarse-to-fine 1D autoregressive (AR) image generation method that models images through a novel next-detail prediction strategy. By learning a resolution-aware token sequence supervised with progressively degraded images, DetailFlow enables the generation process to start from the global structure and incrementally refine details. This coarse-to-fine 1D token sequence aligns well with the autoregressive inference mechanism, providing a more natural and efficient way for the AR model to generate complex visual content. Our compact 1D AR model achieves high-quality image synthesis with significantly fewer tokens than previous approaches such as VAR and VQGAN. We further propose a parallel inference mechanism with self-correction that accelerates generation by approximately 8x while reducing the accumulated sampling error inherent in teacher-forcing supervision. On the ImageNet 256×256 benchmark, our method achieves 2.96 gFID with 128 tokens, outperforming VAR (3.3 FID) and FlexVAR (3.05 FID), which both require 680 tokens in their AR models. Moreover, owing to the significantly reduced token count and the parallel inference mechanism, our method runs nearly 2x faster than VAR and FlexVAR at inference. Extensive experimental results demonstrate DetailFlow’s superior generation quality and efficiency compared to existing state-of-the-art methods.
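To make the idea of supervising token prefixes with progressively degraded images concrete, the following is a minimal sketch and not the authors' implementation. It assumes that a prefix of `n` tokens is paired with a lower-resolution copy of the image, and that the degradation is plain average pooling. The function names (`degrade`, `target_side`, `coarse_to_fine_targets`) and the linear token-to-resolution schedule are hypothetical choices made for illustration; the abstract does not specify either.

```python
def degrade(image, side):
    """Average-pool a square grayscale image (a list of rows) to side x side."""
    full = len(image)
    if full % side != 0:
        raise ValueError("side must divide the image size")
    f = full // side  # pooling factor along each axis
    return [
        [
            sum(image[r * f + i][c * f + j] for i in range(f) for j in range(f))
            / (f * f)
            for c in range(side)
        ]
        for r in range(side)
    ]


def target_side(n_tokens, max_tokens=128, full_side=256):
    """Hypothetical linear schedule: the resolution grows with the prefix length.

    This mapping is an assumption for illustration, not taken from the paper.
    """
    return full_side * n_tokens // max_tokens


def coarse_to_fine_targets(image, prefix_lengths, max_tokens=128):
    """Pair each token-prefix length with a degraded reconstruction target."""
    full = len(image)
    return [
        (n, degrade(image, target_side(n, max_tokens, full)))
        for n in prefix_lengths
    ]


if __name__ == "__main__":
    # Toy 8x8 "image" with an 8-token budget, standing in for 256x256 and 128 tokens.
    img = [[r * 8 + c for c in range(8)] for r in range(8)]
    for n, target in coarse_to_fine_targets(img, [2, 4, 8], max_tokens=8):
        print(n, "tokens ->", len(target), "x", len(target), "target")
```

Under this assumed schedule, short prefixes are only asked to reproduce a heavily pooled image (global structure), while the full sequence is asked to reproduce the original, which mirrors the coarse-to-fine ordering described in the abstract.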