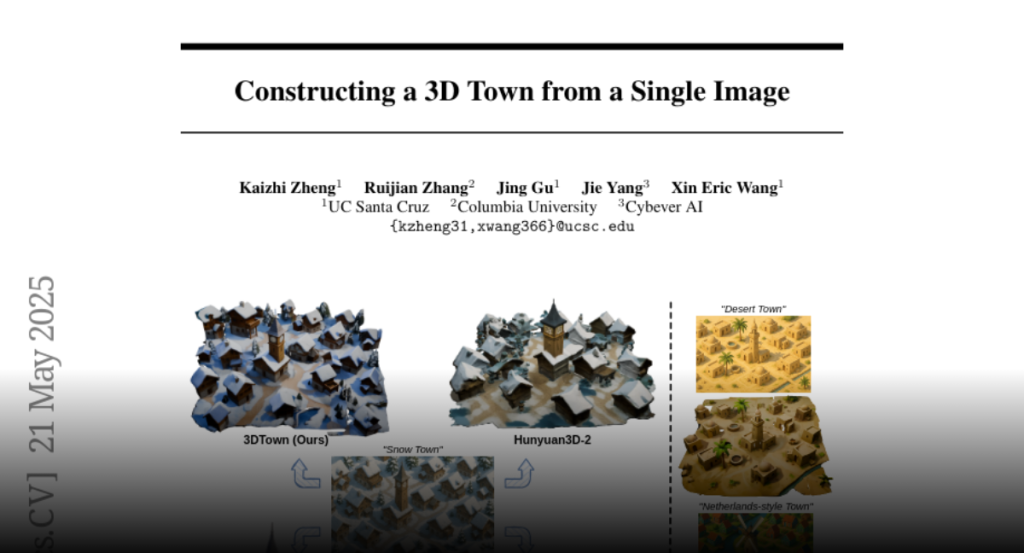

A training-free framework named 3DTown generates realistic 3D scenes from a single top-down image using region-based generation and spatial-aware 3D inpainting techniques.

Acquiring detailed 3D scenes typically demands costly equipment, multi-view data, or labor-intensive modeling. Therefore, a lightweight alternative, generating complex 3D scenes from a single top-down image, plays an essential role in real-world applications. While recent 3D generative models have achieved remarkable results at the object level, their extension to full-scene generation often leads to inconsistent geometry, layout hallucinations, and low-quality meshes. In this work, we introduce 3DTown, a training-free framework designed to synthesize realistic and coherent 3D scenes from a single top-down view. Our method is grounded in two principles: region-based generation to improve image-to-3D alignment and resolution, and spatial-aware 3D inpainting to ensure global scene coherence and high-quality geometry generation. Specifically, we decompose the input image into overlapping regions and generate each using a pretrained 3D object generator, followed by a masked rectified flow inpainting process that fills in missing geometry while maintaining structural continuity. This modular design allows us to overcome resolution bottlenecks and preserve spatial structure without requiring 3D supervision or fine-tuning. Extensive experiments across diverse scenes show that 3DTown outperforms state-of-the-art baselines, including Trellis, Hunyuan3D-2, and TripoSG, in terms of geometry quality, spatial coherence, and texture fidelity. Our results demonstrate that high-quality 3D town generation is achievable from a single image using a principled, training-free approach.