
Chinese AI startup MiniMax, perhaps best known in the West for its hit realistic AI video model Hailuo, has released its latest large language model, MiniMax-M1 — and in great news for enterprises and developers, it’s completely open source under an Apache 2.0 license, meaning businesses can take it and use it for commercial applications and modify it to their liking without restriction or payment.

M1 is an open-weight offering that sets new standards in long-context reasoning, agentic tool use, and efficient compute performance. It’s available today on the AI code sharing community Hugging Face and on GitHub, Microsoft’s rival code sharing community. It is the first release of what the company dubbed “MiniMaxWeek” on its X account, with further product announcements expected.

MiniMax-M1 distinguishes itself with a context window of 1 million input tokens and up to 80,000 tokens in output, positioning it as one of the most expansive models available for long-context reasoning tasks.

The “context window” in large language models (LLMs) refers to the maximum number of tokens the model can process at one time — including both input and output. Tokens are the basic units of text, which may include entire words, parts of words, punctuation marks, or code symbols. These tokens are converted into numerical vectors that the model uses to represent and manipulate meaning through its parameters (weights and biases). They are, in essence, the LLM’s native language.

For comparison, OpenAI’s GPT-4o has a context window of only 128,000 tokens, enough to exchange about a novel’s worth of information between the user and the model in a single back-and-forth interaction. At 1 million tokens, MiniMax-M1 could exchange a small collection or book series’ worth of information. Google’s Gemini 2.5 Pro also offers a context limit of 1 million tokens, with a reported 2 million window in the works.
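To make the token arithmetic concrete, here is a minimal Python sketch that checks whether a request fits a given context window. The function name `fits_context` and the example token counts are illustrative assumptions. Only the 128,000-token and 1-million-token limits come from the figures above, and the sketch treats the window as a single budget shared by input and output.

```python
def fits_context(input_tokens: int, output_tokens: int, window: int) -> bool:
    """Return True if input plus output tokens fit within the context window."""
    return input_tokens + output_tokens <= window


GPT_4O_WINDOW = 128_000         # figure quoted in the article
ONE_MILLION_WINDOW = 1_000_000  # figure quoted in the article for MiniMax-M1

# Hypothetical request: a 300,000-token document plus a 40,000-token answer.
doc_tokens, answer_tokens = 300_000, 40_000

print(fits_context(doc_tokens, answer_tokens, GPT_4O_WINDOW))       # False
print(fits_context(doc_tokens, answer_tokens, ONE_MILLION_WINDOW))  # True
```

A request of this size would need to be chunked or summarized for a 128,000-token model, while it fits in a 1-million-token window in a single pass.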

But M1 has another trick up its sleeve: it was trained with reinforcement learning using an innovative, resource-efficient technique. The model is built on a hybrid Mixture-of-Experts (MoE) architecture with a lightning attention mechanism designed to reduce inference costs.

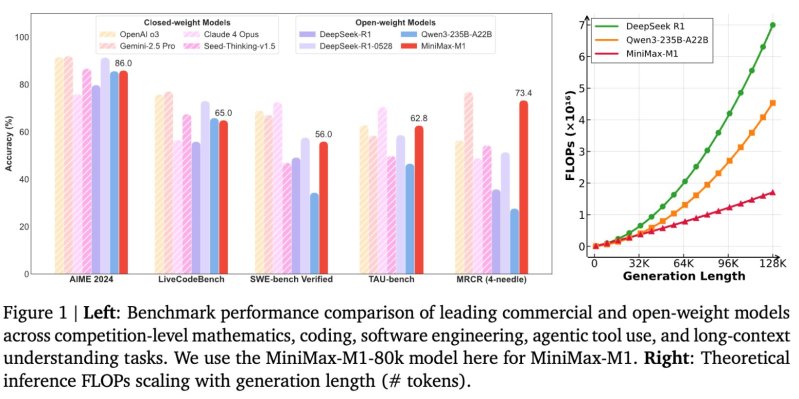

According to the technical report, MiniMax-M1 consumes only 25% of the floating point operations (FLOPs) required by DeepSeek R1 at a generation length of 100,000 tokens.

Architecture and variants

The model comes in two variants, MiniMax-M1-40k and MiniMax-M1-80k, named for their “thinking budgets,” or output lengths.

The architecture is built on the company’s earlier MiniMax-Text-01 foundation and includes 456 billion parameters, with 45.9 billion activated per token.
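As a quick illustration of what those two numbers imply, the short Python sketch below computes the share of parameters activated per token. The constant and function names are illustrative; only the 456 billion and 45.9 billion figures come from the article.

```python
TOTAL_PARAMS_B = 456.0   # total parameters, in billions (from the article)
ACTIVE_PARAMS_B = 45.9   # parameters activated per token, in billions (from the article)


def active_fraction(active_b: float, total_b: float) -> float:
    """Fraction of the model's parameters used for any single token."""
    return active_b / total_b


fraction = active_fraction(ACTIVE_PARAMS_B, TOTAL_PARAMS_B)
print(f"{fraction:.1%} of parameters active per token")  # 10.1% of parameters active per token
```

In other words, roughly one-tenth of the network does the work for each token, which is the usual reason MoE models are cheaper to run than dense models of the same total size.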

A standout feature of the release is the model’s training cost. MiniMax reports that the M1 model was trained using large-scale reinforcement learning (RL) at an efficiency rarely seen in this domain, with a total cost of $534,700.

This efficiency is credited to a custom RL algorithm called CISPO, which clips importance sampling weights rather than token updates, and to the hybrid attention design that helps streamline scaling.
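The distinction between clipping token updates and clipping importance sampling weights can be sketched in a few lines of Python. This is a simplified illustration of the general idea, not MiniMax’s implementation: the function names, the epsilon values, and the per-token “gradient coefficient” framing are all assumptions made for the example.

```python
def ppo_token_coeff(ratio: float, advantage: float, eps: float = 0.2) -> float:
    """Per-token gradient coefficient under PPO-style clipping.

    When the probability ratio moves outside the trust region in the
    direction the advantage favors, the token's update is dropped entirely.
    """
    clipped_high = advantage > 0 and ratio > 1 + eps
    clipped_low = advantage < 0 and ratio < 1 - eps
    return 0.0 if (clipped_high or clipped_low) else ratio * advantage


def clipped_is_coeff(ratio: float, advantage: float,
                     eps_low: float = 0.2, eps_high: float = 0.2) -> float:
    """Per-token coefficient when only the importance sampling weight is clipped.

    The weight is bounded, but every token still contributes to the update.
    """
    weight = min(max(ratio, 1 - eps_low), 1 + eps_high)
    return weight * advantage


# Hypothetical token whose probability rose sharply (ratio 1.5) with a positive advantage.
print(ppo_token_coeff(1.5, 1.0))   # 0.0 -> the token is ignored
print(clipped_is_coeff(1.5, 1.0))  # 1.2 -> the token still contributes, with a bounded weight
```

Under these assumptions, the second approach keeps a learning signal from tokens that the first would discard, while still limiting how far any single token can push the update.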

That’s an astonishingly “cheap” amount for a frontier LLM: DeepSeek trained its hit R1 reasoning model at a reported cost of $5-$6 million, while the training cost of OpenAI’s GPT-4, a model now more than two years old, was said to exceed $100 million. These costs stem both from the price of graphics processing units (GPUs), the massively parallel computing hardware primarily manufactured by companies like Nvidia, which can cost $20,000–$30,000 or more per module, and from the energy required to run those chips continuously in large-scale data centers.
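For a rough sense of scale, the Python sketch below turns the reported figures into ratios. The dollar amounts are the ones quoted in this article, and the names are illustrative. The figures are reported numbers that may not cover the same training stages, so the ratios are indicative only.

```python
M1_RL_COST = 534_700                        # reported M1 reinforcement learning cost (USD)
DEEPSEEK_R1_RANGE = (5_000_000, 6_000_000)  # reported R1 cost range (USD)
GPT4_FLOOR = 100_000_000                    # GPT-4 cost was "said to exceed" this (USD)


def cost_ratio(other_cost: float, baseline: float = M1_RL_COST) -> float:
    """How many times more expensive `other_cost` is than the baseline."""
    return other_cost / baseline


low, high = (cost_ratio(c) for c in DEEPSEEK_R1_RANGE)
print(f"DeepSeek R1: roughly {low:.1f}x to {high:.1f}x the M1 figure")
print(f"GPT-4: more than {cost_ratio(GPT4_FLOOR):.0f}x the M1 figure")
```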

Benchmark performance

MiniMax-M1 has been evaluated across a series of established benchmarks that test advanced reasoning, software engineering, and tool-use capabilities.

On AIME 2024, a mathematics competition benchmark, the M1-80k model scores 86.0% accuracy. It also delivers strong performance in coding and long-context tasks, achieving:

65.0% on LiveCodeBench

56.0% on SWE-bench Verified

62.8% on TAU-bench

73.4% on OpenAI MRCR (4-needle version)

These results place MiniMax-M1 ahead of other open-weight competitors such as DeepSeek-R1 and Qwen3-235B-A22B on several complex tasks.

While closed-weight models like OpenAI’s o3 and Gemini 2.5 Pro still top some benchmarks, MiniMax-M1 narrows the performance gap considerably while remaining freely accessible under an Apache-2.0 license.

For deployment, MiniMax recommends vLLM as the serving backend, citing its optimization for large model workloads, memory efficiency, and batch request handling. The company also provides deployment options using the Transformers library.
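As a hedged illustration of what serving through vLLM can look like from the client side, the Python sketch below builds a chat request for vLLM’s OpenAI-compatible HTTP server. It assumes such a server is already running locally on vLLM’s default port, and that the model was registered under the name shown. The model ID, the helper names, and the prompt are assumptions for the example, not details confirmed by MiniMax.

```python
import json
import urllib.request

# Assumptions: a vLLM OpenAI-compatible server is already running locally,
# and MODEL_ID matches the name the server was started with.
BASE_URL = "http://localhost:8000/v1"   # vLLM's default port
MODEL_ID = "MiniMaxAI/MiniMax-M1-80k"   # assumed Hugging Face repository ID


def build_chat_request(prompt: str, max_tokens: int = 1024) -> dict:
    """Build an OpenAI-style chat completion payload."""
    return {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }


def send_chat_request(payload: dict) -> str:
    """POST the payload to the server and return the reply text."""
    req = urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    # Printing the payload needs no server; call send_chat_request(payload)
    # only once a server is actually running.
    payload = build_chat_request("Summarize the attached log file.")
    print(json.dumps(payload, indent=2))
```

Because the server speaks the OpenAI wire format, existing client code can often be pointed at a self-hosted endpoint by changing only the base URL and model name.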

MiniMax-M1 includes structured function calling capabilities and is packaged with a chatbot API featuring online search, video and image generation, speech synthesis, and voice cloning tools. These features aim to support broader agentic behavior in real-world applications.
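To show what structured function calling involves on the application side, here is a minimal Python sketch of a tool definition and a dispatcher. The schema follows the widely used OpenAI-style tool format, which is an assumption here rather than a confirmed description of M1’s interface. The `get_order_status` tool, its fields, and the simulated model output are all hypothetical.

```python
import json


# Hypothetical tool: the name, schema, and return value are illustrative only.
def get_order_status(order_id: str) -> dict:
    return {"order_id": order_id, "status": "shipped"}


# Tool description sent to the model so it knows what it may call.
TOOLS = [{
    "type": "function",
    "function": {
        "name": "get_order_status",
        "description": "Look up the shipping status of an order.",
        "parameters": {
            "type": "object",
            "properties": {"order_id": {"type": "string"}},
            "required": ["order_id"],
        },
    },
}]

# Maps tool names the model may emit to local Python callables.
REGISTRY = {"get_order_status": get_order_status}


def dispatch_tool_call(name: str, arguments_json: str) -> str:
    """Run the requested tool and return its result as a JSON string."""
    if name not in REGISTRY:
        raise ValueError(f"unknown tool: {name}")
    args = json.loads(arguments_json)
    return json.dumps(REGISTRY[name](**args))


# Simulated model output: a tool name plus JSON-encoded arguments.
print(dispatch_tool_call("get_order_status", '{"order_id": "A123"}'))
```

The returned JSON string would normally be passed back to the model as a tool message so it can compose its final answer.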

Implications for technical decision-makers and enterprise buyers

MiniMax-M1’s open access, long-context capabilities, and compute efficiency address several recurring challenges for technical professionals responsible for managing AI systems at scale.

For engineering leads responsible for the full lifecycle of LLMs — such as optimizing model performance and deploying under tight timelines — MiniMax-M1 offers a lower operational cost profile while supporting advanced reasoning tasks. Its long context window could significantly reduce preprocessing efforts for enterprise documents or log data that span tens or hundreds of thousands of tokens.

For those managing AI orchestration pipelines, the ability to fine-tune and deploy MiniMax-M1 using established tools like vLLM or Transformers supports easier integration into existing infrastructure. The hybrid-attention architecture may help simplify scaling strategies, and the model’s competitive performance on multi-step reasoning and software engineering benchmarks offers a high-capability base for internal copilots or agent-based systems.

From a data platform perspective, teams responsible for maintaining efficient, scalable infrastructure can benefit from M1’s support for structured function calling and its compatibility with automated pipelines. Its open-source nature allows teams to tailor performance to their stack without vendor lock-in.

Security leads may also find value in evaluating M1’s potential for secure, on-premises deployment of a high-capability model that doesn’t rely on transmitting sensitive data to third-party endpoints.

Taken together, MiniMax-M1 presents a flexible option for organizations looking to experiment with or scale up advanced AI capabilities while managing costs, staying within operational limits, and avoiding proprietary constraints.

The release signals MiniMax’s continued focus on practical, scalable AI models. By combining open access with advanced architecture and compute efficiency, MiniMax-M1 may serve as a foundational model for developers building next-generation applications that require both reasoning depth and long-range input understanding.

We’ll be tracking MiniMax’s other releases throughout the week. Stay tuned!