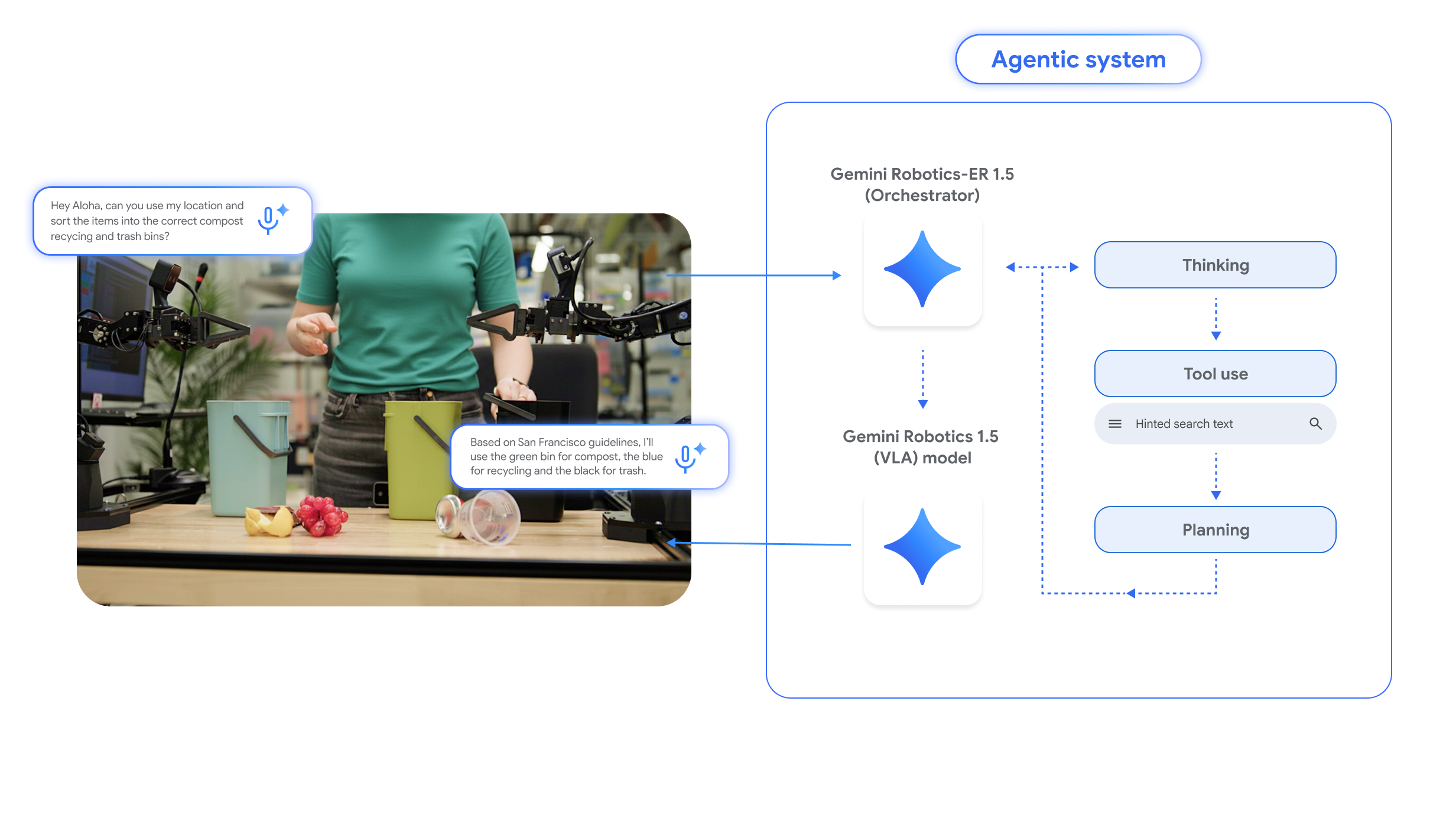

Imagine that you want a robot to sort a pile of laundry into whites and colors. Gemini Robotics-ER 1.5 would process the request along with images of the physical environment (a pile of clothing). This AI can also call tools like Google search to gather more data. The ER model then generates natural language instructions, specific steps that the robot should follow to complete the given task.

Credit:

Google

The two new models work together to “think” about how to complete a task.

Credit:

Gemini Robotics 1.5 (the action model) takes these instructions from the ER model and generates robot actions while using visual input to guide its movements. But it also goes through its own thinking process to consider how to approach each step. “There are all these kinds of intuitive thoughts that help [a person] guide this task, but robots don’t have this intuition,” said DeepMind’s Kanishka Rao. “One of the major advancements that we’ve made with 1.5 in the VLA is its ability to think before it acts.”

Both of DeepMind’s new robotic AIs are built on the Gemini foundation models but have been fine-tuned with data that adapts them to operating in a physical space. This approach, the team says, gives robots the ability to undertake more complex multi-stage tasks, bringing agentic capabilities to robotics.

The DeepMind team tests Gemini robotics with a few different machines, like the two-armed Aloha 2 and the humanoid Apollo. In the past, AI researchers had to create customized models for each robot, but that’s no longer necessary. DeepMind says that Gemini Robotics 1.5 can learn across different embodiments, transferring skills learned from Aloha 2’s grippers to the more intricate hands on Apollo with no specialized tuning.

All this talk of physical agents powered by AI is fun, but we’re still a long way from a robot you can order to do your laundry. Gemini Robotics 1.5, the model that actually controls robots, is still only available to trusted testers. However, the thinking ER model is now rolling out in Google AI Studio, allowing developers to generate robotic instructions for their own physically embodied robotic experiments.