This post was co-written with Nick Frichette and Vijay George from Datadog.

As organizations increasingly adopt Amazon Bedrock for generative AI applications, protecting against misconfigurations that could lead to data leaks or unauthorized model access becomes critical. The AWS Generative AI Adoption Index, which surveyed 3,739 senior IT decision-makers across nine countries, revealed that 45% of organizations selected generative AI tools as their top budget priority in 2025. As more AWS and Datadog customers accelerate their adoption of AI, building AI security into existing processes will become essential, especially as more stringent regulations emerge. But looking at AI risks in a silo isn’t enough; AI risks must be contextualized alongside other risks such as identity exposures and misconfigurations. The combination of Amazon Bedrock and Datadog’s comprehensive security monitoring helps organizations innovate faster while maintaining robust security controls.

Amazon Bedrock delivers enterprise-grade security by incorporating built-in protections across data privacy, access controls, network security, compliance, and responsible AI safeguards. Customer data is encrypted both in transit using TLS 1.2 or above and at rest with AWS Key Management Service (AWS KMS), and organizations have full control over encryption keys. Data privacy is central: your input, prompts, and outputs are not shared with model providers nor used to train or improve foundation models (FMs). Fine-tuning and customizations occur on private copies of models, providing data confidentiality. Access is tightly governed through AWS Identity and Access Management (IAM) and resource-based policies, supporting granular authorization for users and roles. Amazon Bedrock integrates with AWS PrivateLink and supports virtual private cloud (VPC) endpoints for private, internal communication, so traffic doesn’t leave the Amazon network. The service complies with key industry standards such as ISO, SOC, CSA STAR, HIPAA eligibility, GDPR, and FedRAMP High, making it suitable for regulated industries. Additionally, Amazon Bedrock includes configurable guardrails to filter sensitive or harmful content and promote responsible AI use. Security is structured under the AWS Shared Responsibility Model, where AWS manages infrastructure security and customers are responsible for secure configurations and access controls within their Amazon Bedrock environment.

Building on these robust AWS security features, Datadog and AWS have partnered to provide a holistic view of AI infrastructure risks, vulnerabilities, sensitive data exposure, and other misconfigurations. Datadog Cloud Security employs both agentless and agent-based scanning to help organizations identify, prioritize, and remediate risks across cloud resources. This integration helps AWS users prioritize risks based on business criticality, with security findings enriched by observability data, thereby enhancing their overall security posture in AI implementations.

We’re excited to announce new security capabilities in Datadog Cloud Security that can help you detect and remediate Amazon Bedrock misconfigurations before they become security incidents. This integration helps organizations embed robust security controls and secure their use of the powerful capabilities of Amazon Bedrock by offering three critical advantages: holistic AI security by integrating AI security into your broader cloud security strategy, real-time risk detection through identifying potential AI-related security issues as they emerge, and simplified compliance to help meet evolving AI regulations with pre-built detections.

AWS and Datadog: Empowering customers to adopt AI securely

The partnership between AWS and Datadog is focused on helping customers operate their cloud infrastructure securely and efficiently. As organizations rapidly adopt AI technologies, extending this partnership to include Amazon Bedrock is a natural evolution. Amazon Bedrock is a fully managed service that makes high-performing FMs from leading AI companies and Amazon available through a unified API, making it an ideal starting point for Datadog’s AI security capabilities.

The decision to prioritize Amazon Bedrock integration is driven by several factors, including strong customer demand, comprehensive security needs, and the existing integration foundation. Datadog offers over 900 integrations and a partner-built Marketplace. Its long-standing partnership with AWS and deep integration capabilities have helped it quickly develop comprehensive security monitoring for Amazon Bedrock while building on its existing cloud security expertise.

Throughout Q4 2024, Datadog Security Research observed increasing threat actor interest in cloud AI environments, making this integration particularly timely. By combining the powerful AI capabilities of AWS with Datadog’s security expertise, you can safely accelerate your AI adoption while maintaining robust security controls.

How Datadog Cloud Security helps secure Amazon Bedrock resources

After you add the AWS integration to your Datadog account and enable Datadog Cloud Security, it continuously monitors your AWS environment, identifying misconfigurations, identity risks, vulnerabilities, and compliance violations. Datadog prioritizes these detections with its Severity Scoring system, which takes infrastructure context into account. The scoring considers a variety of variables, including whether the resource is in production, is publicly accessible, or has access to sensitive data. By also considering runtime behavior, this multi-layer analysis can help you reduce noise and focus your attention on the most critical misconfigurations.

Partnering with AWS, Datadog is excited to offer detections for Datadog Cloud Security customers, such as:

Amazon Bedrock custom models should not output model data to publicly accessible S3 buckets

Amazon Bedrock custom models should not train from publicly writable S3 buckets

Amazon Bedrock guardrails should have a prompt attack filter enabled and block prompt attacks at high sensitivity

Amazon Bedrock agent guardrails should have the sensitive information filter enabled and block highly sensitive PII entities
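To make the prompt attack detection above more concrete, here is a minimal Python sketch of the kind of check it implies. It is not Datadog's rule logic. It assumes the guardrail is described by a dictionary shaped like the Amazon Bedrock `GetGuardrail` response, where `contentPolicy.filters` holds entries with `type` and `inputStrength` fields; the function name is hypothetical.

```python
def prompt_attack_filter_is_strict(guardrail: dict) -> bool:
    """Return True if the guardrail has a PROMPT_ATTACK filter at HIGH input strength.

    `guardrail` is assumed to look like a GetGuardrail response. A missing
    content policy or a missing PROMPT_ATTACK filter counts as a failure.
    """
    filters = guardrail.get("contentPolicy", {}).get("filters", [])
    for content_filter in filters:
        if content_filter.get("type") == "PROMPT_ATTACK":
            # Prompt attack filtering applies to user input, so only the
            # input strength is inspected in this sketch.
            return content_filter.get("inputStrength") == "HIGH"
    return False


# Illustrative data only; these guardrails do not come from a real account.
strict = {"contentPolicy": {"filters": [
    {"type": "PROMPT_ATTACK", "inputStrength": "HIGH", "outputStrength": "NONE"},
]}}
weak = {"contentPolicy": {"filters": [
    {"type": "PROMPT_ATTACK", "inputStrength": "LOW", "outputStrength": "NONE"},
]}}
no_policy = {}

print(prompt_attack_filter_is_strict(strict))
print(prompt_attack_filter_is_strict(weak))
print(prompt_attack_filter_is_strict(no_policy))
```

In practice the dictionary would come from an API call rather than a literal, and a managed detection would evaluate every guardrail in the account on each scan.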

Detect AI misconfigurations with Datadog Cloud Security

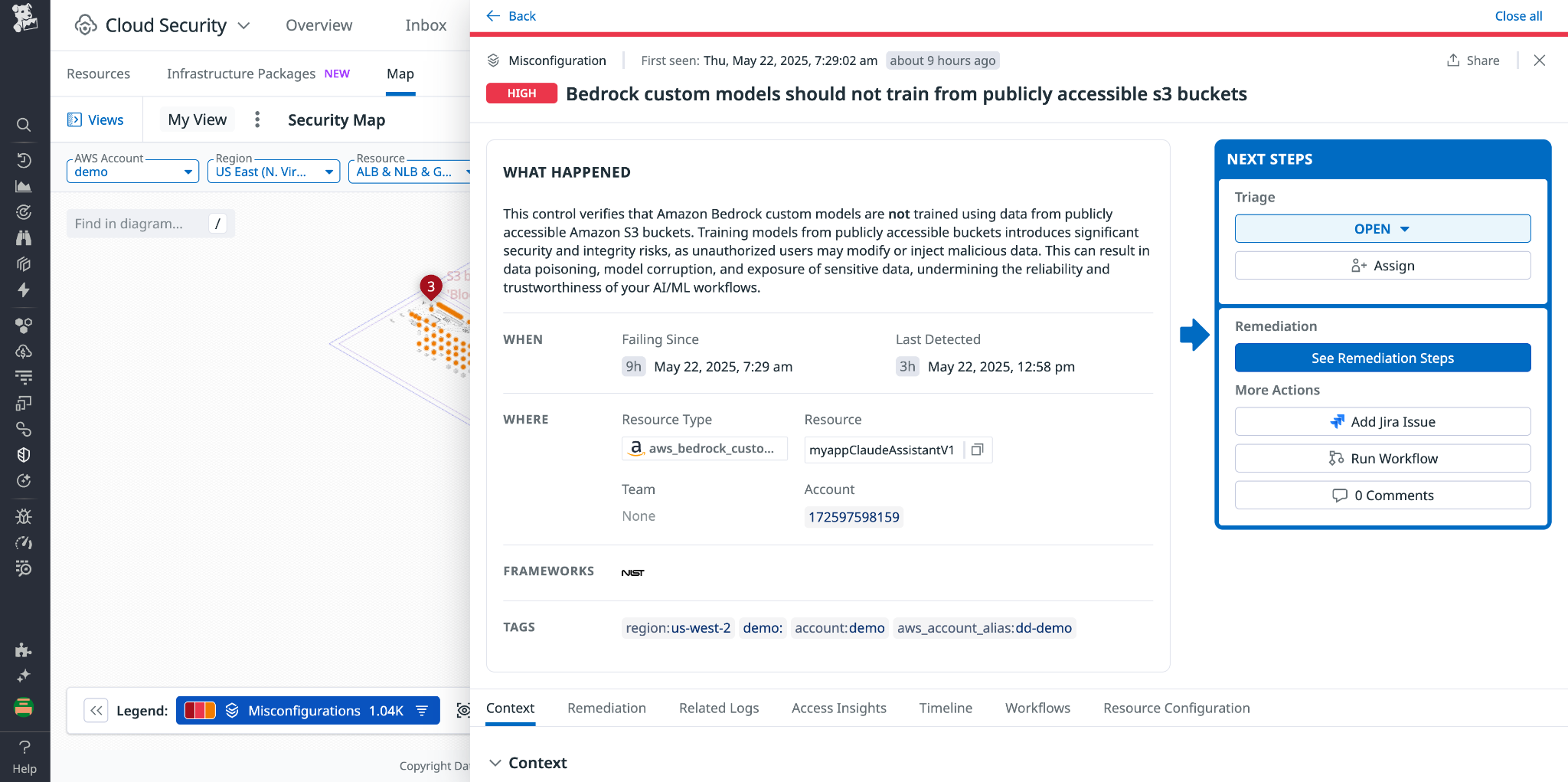

To understand how these detections can help secure your Amazon Bedrock infrastructure, let’s look at a specific use case, in which Amazon Bedrock custom models should not train from publicly writable Amazon Simple Storage Service (Amazon S3) buckets.

With Amazon Bedrock, you can customize AI models by fine-tuning them on domain-specific data, which you store in an S3 bucket. Threat actors are constantly evaluating the configuration of S3 buckets, looking for the potential to access sensitive data or even the ability to write to S3 buckets.

If a threat actor finds an S3 bucket that was misconfigured to permit public write access, and that same bucket contains data used to train an AI model, they could poison that dataset and introduce malicious behavior or output to the model. This is known as a data poisoning attack.

Normally, detecting these types of misconfigurations requires multiple steps: one to identify the S3 bucket misconfigured with write access, and one to identify that the bucket is being used by Amazon Bedrock. With Datadog Cloud Security, this detection is one of hundreds that are activated out of the box.
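The two manual steps described above can be sketched in Python as follows. This is an illustration under stated assumptions, not how Datadog implements the detection. It assumes model descriptions shaped like the Amazon Bedrock `GetCustomModel` response (`modelName`, `trainingDataConfig.s3Uri`) and bucket ACLs shaped like the Amazon S3 `GetBucketAcl` response. The function names are hypothetical, and the sketch inspects ACL grants only; a bucket policy can also grant public write access and would need a separate check.

```python
from urllib.parse import urlparse

# Predefined S3 groups that make a grant effectively public.
PUBLIC_GROUPS = {
    "http://acs.amazonaws.com/groups/global/AllUsers",
    "http://acs.amazonaws.com/groups/global/AuthenticatedUsers",
}
WRITE_PERMISSIONS = {"WRITE", "FULL_CONTROL"}


def bucket_from_s3_uri(s3_uri: str) -> str:
    """Return the bucket name from an s3://bucket/key URI."""
    parsed = urlparse(s3_uri)
    if parsed.scheme != "s3" or not parsed.netloc:
        raise ValueError(f"not an S3 URI: {s3_uri!r}")
    return parsed.netloc


def acl_allows_public_write(acl: dict) -> bool:
    """Step 1: does the ACL grant write access to a public group?"""
    for grant in acl.get("Grants", []):
        grantee = grant.get("Grantee", {})
        if (grantee.get("URI") in PUBLIC_GROUPS
                and grant.get("Permission") in WRITE_PERMISSIONS):
            return True
    return False


def models_training_from_public_buckets(models: list, bucket_acls: dict) -> list:
    """Step 2: keep only models whose training bucket is publicly writable.

    Returns (model name, bucket name) pairs.
    """
    findings = []
    for model in models:
        bucket = bucket_from_s3_uri(model["trainingDataConfig"]["s3Uri"])
        if acl_allows_public_write(bucket_acls.get(bucket, {})):
            findings.append((model["modelName"], bucket))
    return findings


# Illustrative data only; names and buckets are made up.
example_models = [
    {"modelName": "support-bot", "trainingDataConfig": {"s3Uri": "s3://open-bucket/train.jsonl"}},
    {"modelName": "summarizer", "trainingDataConfig": {"s3Uri": "s3://closed-bucket/train.jsonl"}},
]
example_acls = {
    "open-bucket": {"Grants": [{
        "Grantee": {"Type": "Group", "URI": "http://acs.amazonaws.com/groups/global/AllUsers"},
        "Permission": "WRITE",
    }]},
    "closed-bucket": {"Grants": [{
        "Grantee": {"Type": "CanonicalUser", "ID": "owner"},
        "Permission": "FULL_CONTROL",
    }]},
}

print(models_training_from_public_buckets(example_models, example_acls))
```

Even in this reduced form, the check needs data from two services and a join between them, which is the correlation work an out-of-the-box detection removes.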

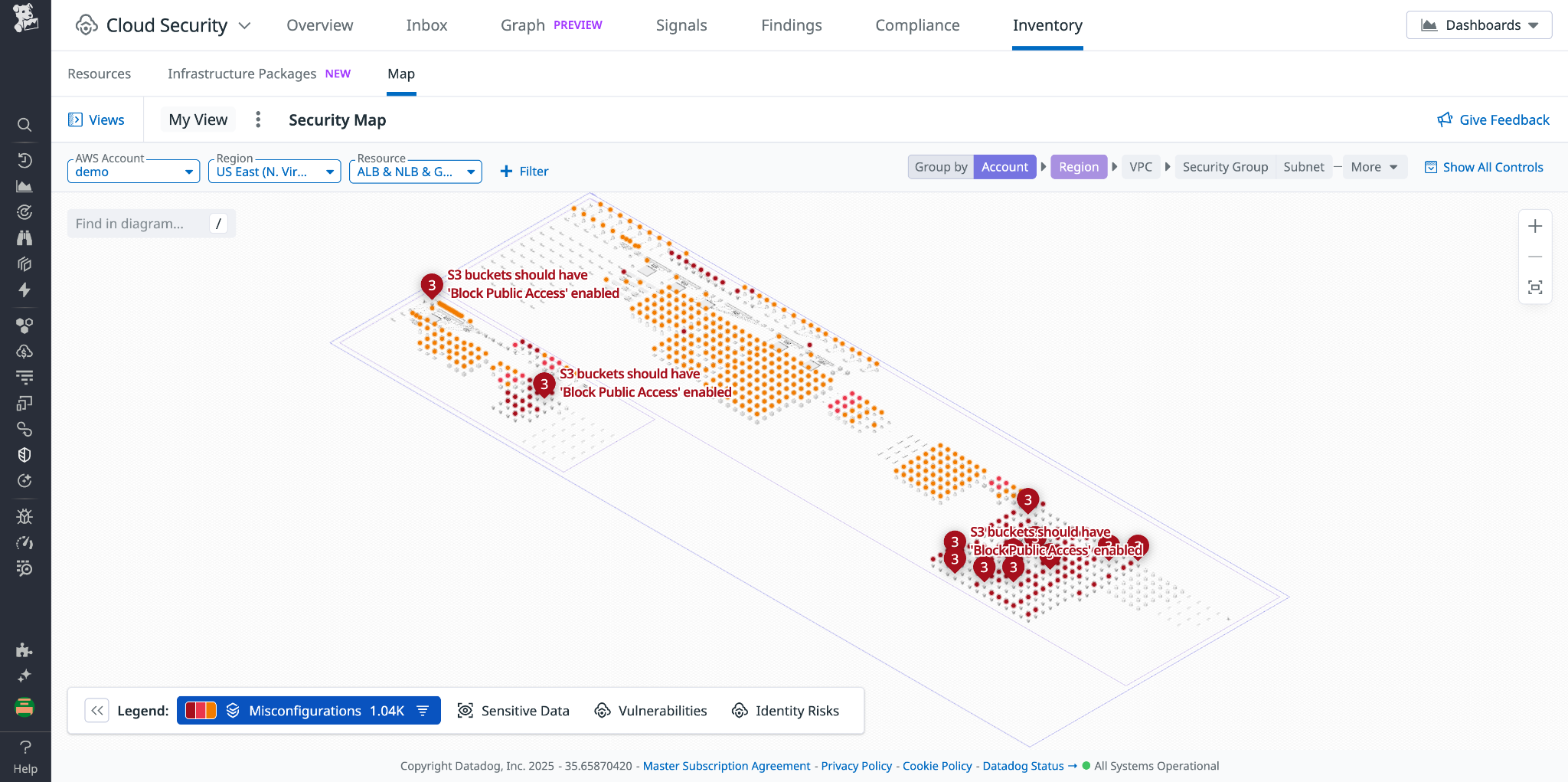

In Datadog Cloud Security, you can view this issue alongside surrounding infrastructure using Cloud Map. This provides live diagrams of your cloud architecture, as shown in the following screenshot. AI risks are then contextualized alongside sensitive data exposure, identity risks, vulnerabilities, and other misconfigurations to give you a 360-degree view of risks.

For example, you might see that your application is using Anthropic’s Claude 3.7 on Amazon Bedrock and accessing training or prompt data stored in an S3 bucket that also has public write access. This could inadvertently impact model integrity by introducing unapproved data to the large language model (LLM), so you will want to update this configuration. Though basic, the first step for most security initiatives is identifying the issue. With agentless scanning, Datadog scans your AWS environment at intervals between 15 minutes and 2 hours, so you can identify misconfigurations as they are introduced to your environment.

The next step is to remediate this risk. Datadog Cloud Security offers automatically generated remediation guidance specific to each risk (see the following screenshot), with a step-by-step explanation of how to fix each finding. In this situation, you can remediate the issue by modifying the S3 bucket’s policy to prevent public write access. You can do this directly in AWS, create a Jira ticket, or use the built-in workflow automation tools. From here, you can apply remediation steps directly within Datadog and confirm that the misconfiguration has been resolved.
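As a rough illustration of the bucket policy change described above, the following Python sketch removes statements that allow anyone to write to a bucket. It is not the remediation Datadog generates. The function names are hypothetical, and the sketch makes simplifying assumptions: it treats a statement as public when its principal is `*`, treats `s3:Put*`, `s3:Delete*`, `s3:*`, and `*` as write actions, and ignores `Condition`, `NotAction`, and `NotPrincipal` elements.

```python
import copy

# Lowercased action prefixes treated as "write" in this sketch.
WRITE_ACTION_PREFIXES = ("s3:put", "s3:delete", "s3:*", "*")


def _as_list(value):
    """IAM policy fields may hold a single value or a list; normalize to a list."""
    return value if isinstance(value, list) else [value]


def _is_public_principal(principal) -> bool:
    if principal == "*":
        return True
    if isinstance(principal, dict):
        return "*" in _as_list(principal.get("AWS", []))
    return False


def _allows_write(actions) -> bool:
    return any(a.lower().startswith(WRITE_ACTION_PREFIXES) for a in _as_list(actions))


def remove_public_write_statements(policy: dict) -> dict:
    """Return a copy of the policy without Allow statements granting public write."""
    cleaned = copy.deepcopy(policy)
    cleaned["Statement"] = [
        stmt for stmt in _as_list(cleaned.get("Statement", []))
        if not (
            stmt.get("Effect") == "Allow"
            and _is_public_principal(stmt.get("Principal"))
            and _allows_write(stmt.get("Action", []))
        )
    ]
    return cleaned


# Illustrative policy only; the bucket name is made up.
example_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Sid": "PublicWrite", "Effect": "Allow", "Principal": "*",
         "Action": "s3:PutObject", "Resource": "arn:aws:s3:::training-data/*"},
        {"Sid": "TeamRead", "Effect": "Allow",
         "Principal": {"AWS": "arn:aws:iam::111122223333:role/ml-team"},
         "Action": ["s3:GetObject"], "Resource": "arn:aws:s3:::training-data/*"},
    ],
}

fixed_policy = remove_public_write_statements(example_policy)
print([stmt["Sid"] for stmt in fixed_policy["Statement"]])
```

The function returns a new document and leaves the input untouched, so the change can be reviewed before it is applied. Enabling S3 Block Public Access on the bucket is a complementary control that guards against the same misconfiguration being reintroduced.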

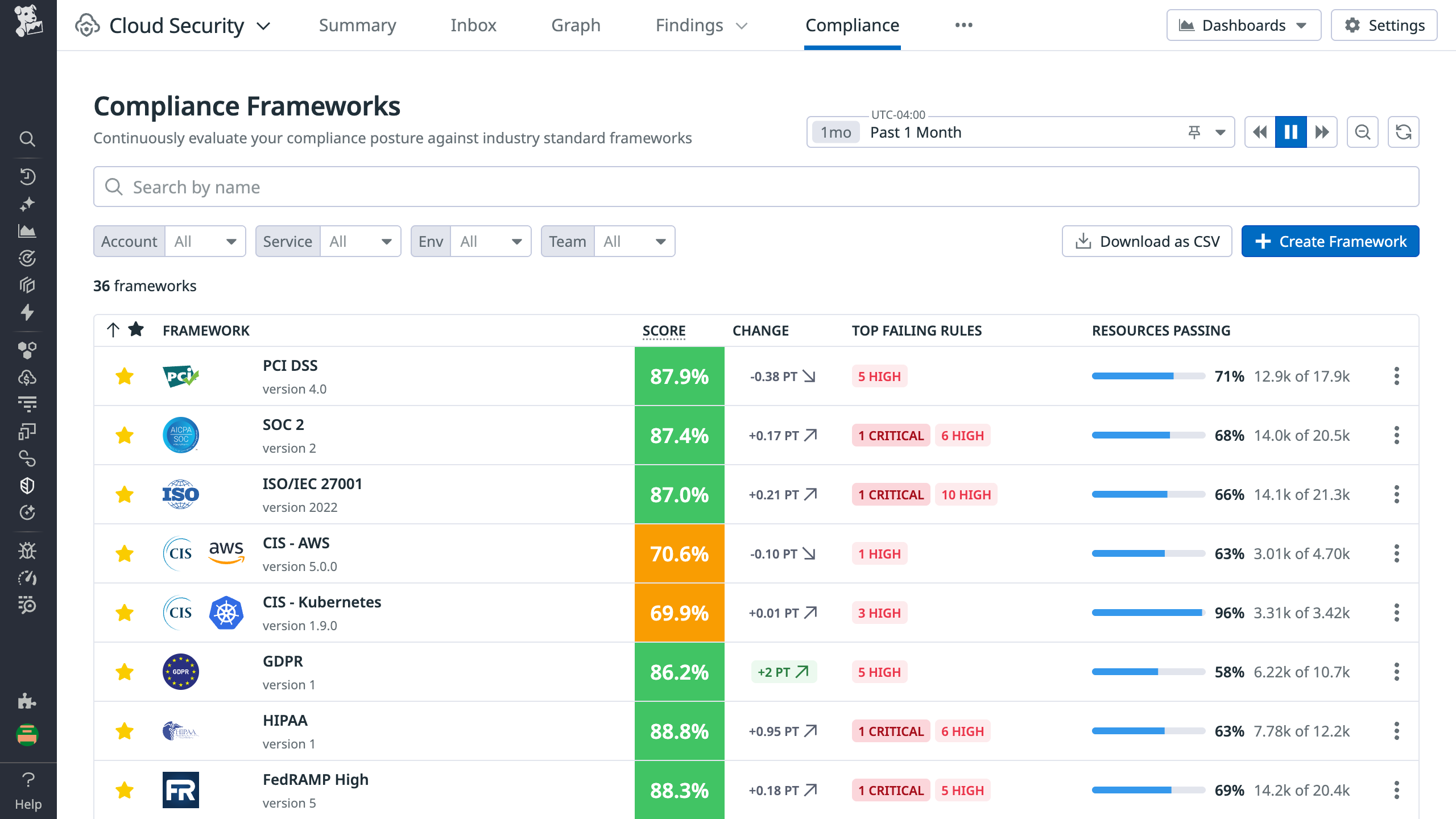

Resolving this issue will positively impact your compliance posture, as illustrated by the posture score in Datadog Cloud Security, helping teams meet internal benchmarks and regulatory standards. Teams can also create custom frameworks or iterate on existing ones for tailored compliance controls.

As generative AI is embraced across industries, the regulatory environment will evolve. Datadog will continue partnering with AWS to expand its detection library and support secure AI adoption and compliance.

How Datadog Cloud Security detects misconfigurations in your cloud environment

You can deploy Datadog Cloud Security with the Datadog Agent, agentlessly, or both to maximize security coverage in your cloud environment. To start monitoring your AWS accounts for misconfigurations, first add the AWS integration to Datadog. This enables Datadog to crawl cloud resources in your AWS accounts.

As Datadog finds resources, it evaluates them against a catalog of hundreds of out-of-the-box detection rules, looking for misconfigurations and threat paths that adversaries can exploit.

Secure your AI infrastructure with Datadog

Misconfigurations in AI systems can be risky, but with the right tools, you can have the visibility and context needed to manage them. With Datadog Cloud Security, teams gain visibility into these risks, detect threats early, and remediate issues with confidence. Datadog has also released numerous agentic AI security features designed to help teams gain visibility into the health and security of critical AI workloads, including new announcements for Datadog’s LLM observability features.

Lastly, Datadog announced Bits AI Security Analyst alongside other Bits AI agents at DASH. Included as part of Cloud SIEM, Bits is an agentic AI security analyst that automates triage for AWS CloudTrail signals. Bits investigates each alert like a seasoned analyst: pulling in relevant context from across your Datadog environment, annotating key findings, and offering a clear recommendation on whether the signal is likely benign or malicious. By accelerating triage and surfacing real threats faster, Bits helps reduce mean time to remediation (MTTR) and frees analysts to focus on important threat hunting and response initiatives. This helps across different threats, including AI-related threats.

To learn more about how Datadog helps secure your AI infrastructure, see Monitor Amazon Bedrock with Datadog or check out our security documentation. If you’re not already using Datadog, you can get started with Datadog Cloud Security with a 14-day free trial.

About the Authors

Nina Chen is a Customer Solutions Manager at AWS specializing in leading software companies to use the power of the AWS Cloud to accelerate their product innovation and growth. With over 4 years of experience working in the strategic independent software vendor (ISV) vertical, Nina enjoys guiding ISV partners through their cloud transformation journeys, helping them optimize their cloud infrastructure, driving product innovation, and delivering exceptional customer experiences.

Sujatha Kuppuraju is a Principal Solutions Architect at AWS, specializing in cloud and generative AI security. She collaborates with software companies’ leadership teams to architect secure, scalable solutions on AWS and guide strategic product development. Using her expertise in cloud architecture and emerging technologies, Sujatha helps organizations optimize offerings, maintain robust security, and bring innovative products to market in an evolving tech landscape.

Nick Frichette is a Staff Security Researcher for Cloud Security Research at Datadog.

Vijay George is a Product Manager for AI Security at Datadog.
