Anthropic, the AI safety startup behind the Claude chatbot, has quietly redrawn its privacy boundaries. Starting this month, all consumer users of Claude, from the free tier to premium subscriptions like Claude Pro and Max, are being asked to make a choice: either allow their conversations to be used to train future AI models, or opt out and keep the stricter data-retention limits that applied before.

The change is one of the most significant updates to Anthropic’s data policies since Claude’s launch. It also highlights a growing tension in the AI industry: companies need enormous volumes of conversational data to improve their models, but users are increasingly cautious about handing over their words, ideas, and code to corporate servers.

What’s different this time

Until now, Claude’s consumer chats were automatically deleted after 30 days unless flagged for abuse, in which case they could be stored for up to two years. Under the new policy, users who opt in allow their conversations, including coding sessions, to be retained for up to five years.

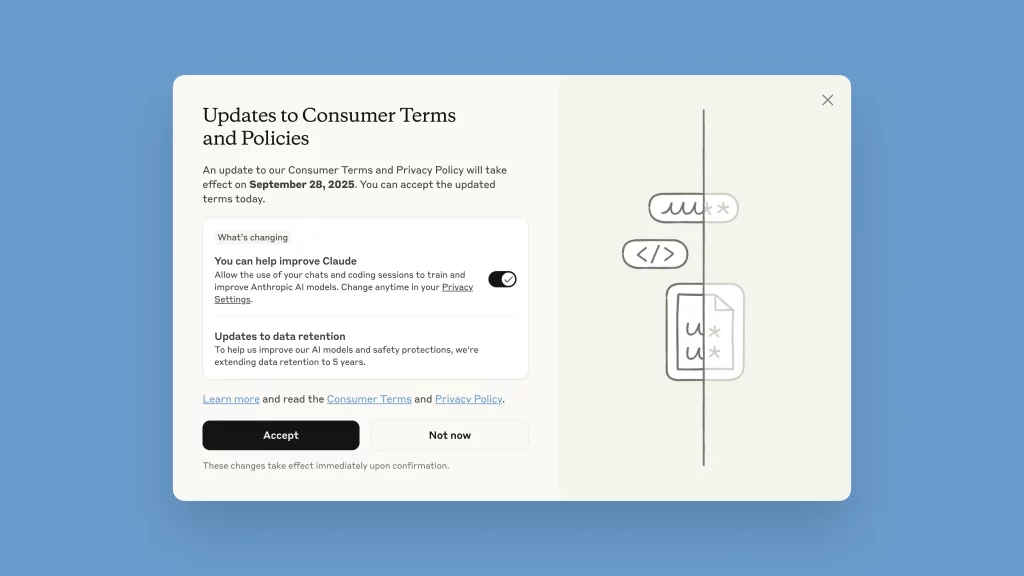

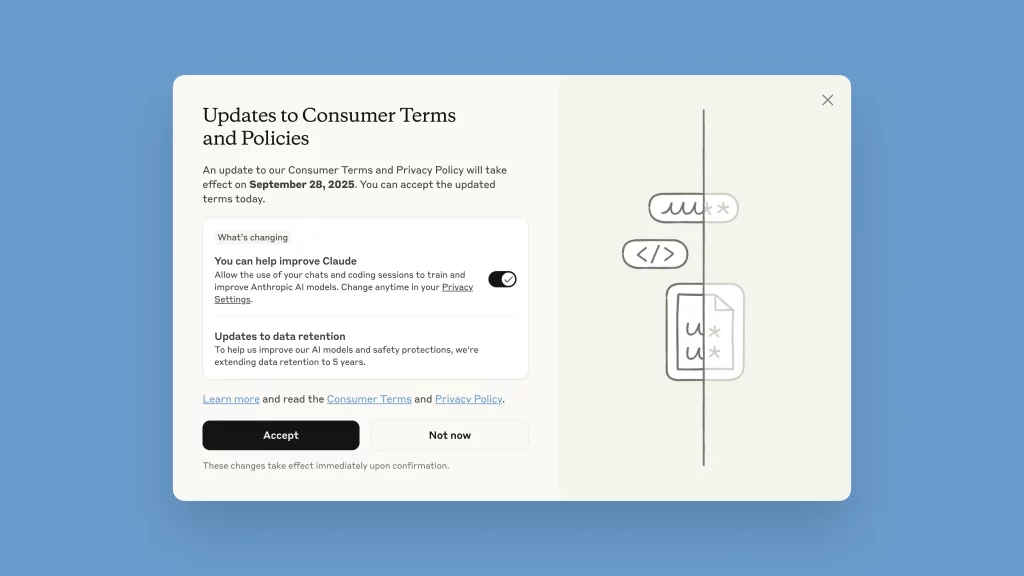

When users log into Claude today, they see a large popup titled “Updates to Consumer Terms and Policies.” At first glance, the layout looks straightforward: a bold “Accept” button dominates the screen. But the crucial toggle that determines whether your data feeds into Claude’s training pipeline is smaller, placed beneath, and switched on by default.

New users face the same screen during signup. Anthropic insists that data use is optional and reversible in settings, but once conversations are included in training, they cannot be withdrawn retroactively.

Notably, this policy shift applies only to consumer-facing products like Claude Free, Pro, and Max. Enterprise and developer services such as Claude for Work, Claude Gov, Claude for Education, and API access, including through Amazon Bedrock or Google Cloud, remain unaffected.

Why it matters

The company has framed this as a way to give users meaningful choice while advancing Claude’s capabilities. In a blog post, Anthropic argued that user data is essential for improving reasoning, coding accuracy, and safety systems. Sensitive information, the company says, is automatically filtered out, conversations are encrypted, and no data is sold to third parties.

For Anthropic, which has marketed itself as a “safety-first” lab, the update is also about credibility: making Claude better at understanding real-world use cases without compromising trust.

Not everyone is convinced. Privacy advocates and user communities, especially on forums like Reddit, have raised alarms over what they call a “dark pattern” in the interface design: a default-on toggle that nudges people into sharing data without truly informed consent.

The debate also echoes arguments elsewhere in the industry. Brad Lightcap, Chief Operating Officer at OpenAI, has previously pushed back against pressure to retain user data, calling it “a sweeping and unnecessary demand” that “fundamentally conflicts with the privacy commitments we have made to our users.”

While Lightcap was responding to a different set of obligations, his comments resonate with concerns now directed at Anthropic: that AI firms, despite public pledges of safety and restraint, are inevitably pulled toward the same hunger for data that drives their rivals.

The stakes for users

For everyday Claude users, the implications are immediate. By September 28, 2025, existing users must accept the new terms and make their choice or lose access to Claude. Old conversations that remain untouched won’t be used for training, but any chat that is reopened falls under the new rules. Data sharing can be disabled at any time in settings, though this protects only future conversations: once data has been absorbed into Claude’s models through training, it cannot be retrieved.

Anthropic built its brand by promising to tread cautiously in the race toward advanced AI. But this policy shift suggests the company is grappling with the same competitive pressures as its peers. Data is the fuel of large language models, and without enough of it, even a well-funded lab risks falling behind.

The question is whether users will accept this trade-off: more powerful AI assistants at the cost of longer, deeper data retention.

As one analyst put it, the optics may matter as much as the policy itself: “Anthropic sold itself as the company that wouldn’t play by Silicon Valley’s rules. This move makes it look like every other AI giant.”

The Claude privacy update is more than just fine print. It’s a signal of how AI companies are converging on a new normal: opt-out systems, long retention windows, and user data at the core of product evolution.

For users, the choice is clear but consequential. Opt in, and your words help shape the next generation of AI. Opt out, and you keep a measure of privacy, perhaps at the expense of slower progress for the tools you rely on.

Either way, September 28 marks a new chapter for Anthropic and its users, one where transparency, trust, and technology collide in real time.