Straight up: the hypesters are winning.

Take hallucinations, a deep, serious, unsolved problem with Generative AI, which I have been warning about since 2001. (That’s not a typo; it’s been nearly a quarter century.)

Media cheerleaders and industry people are trying to convince you that AGI is here, or imminent, and that hallucinations have gone away or are exceedingly rare.

Bullshit. Hallucinations are still here, and they aren't going away anytime soon. LLMs still can't sanity-check their own work, stick purely to known sources, or flag when they have invented something bogus.

But somehow a lot of intelligent people still haven’t gotten the message.

Take lawyers. A lot of lawyers are using tools like ChatGPT to prepare briefs, and many still seem shocked when it makes up cases.

I've given a few examples here over the years, going back to June 2023 or so, and I mentioned some in *Taming Silicon Valley*. (The case above, new to me, was reported in The Guardian today.)

It's hardly a secret by now. But the problem hasn't remotely gone away. When I last posted an example on X, a week or two ago, some dude told me it must be some sort of anomaly. It's not.

It’s a routine occurrence.

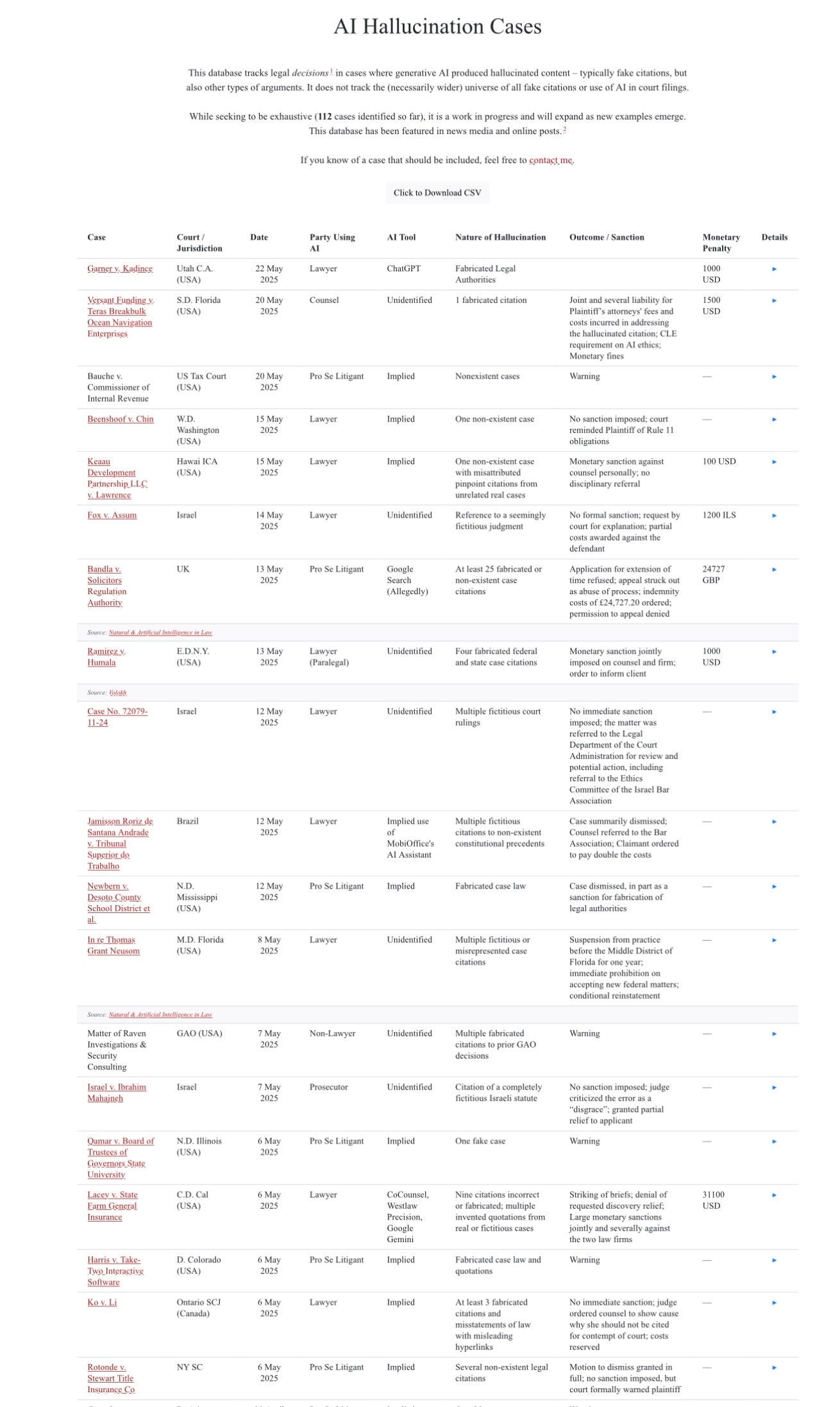

Here are a bunch of examples, from a database compiled by Research Fellow Damien Charlotin:

Look carefully. All of those examples are from this month alone.

And these are just the folks who got caught and were publicized, probably a tiny fraction of the overall incidence. Many judges probably don't notice, or don't make their concerns public. (We have no idea how many decisions were influenced by fake citations that went unnoticed.)

In all, Charlotin's database lists 112 cases, an average of a bit less than one publicly reported case a week since ChatGPT became popular. And if May is representative, the problem is getting worse.

If educated people like lawyers still haven't gotten the message that LLMs hallucinate, and, for that matter, that they themselves can be fined or publicly humiliated for relying on LLMs, something is fundamentally wrong.

The media can and should do more to alert people to the shortcomings of these systems, not just reporting individual failures when they happen, but also showing how regularly they keep happening. Those in the media who have downplayed hallucinations and oversold current AI have done the public a disservice.