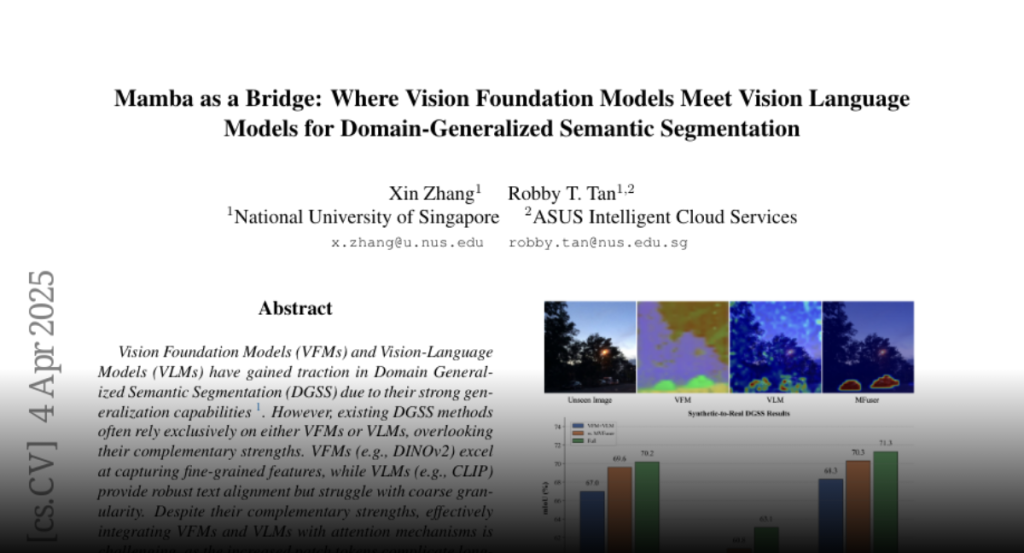

Vision Foundation Models (VFMs) and Vision-Language Models (VLMs) have gained traction in Domain Generalized Semantic Segmentation (DGSS) due to their strong generalization capabilities. However, existing DGSS methods often rely exclusively on either VFMs or VLMs, overlooking their complementary strengths. VFMs (e.g., DINOv2) excel at capturing fine-grained features, while VLMs (e.g., CLIP) provide robust text alignment but produce coarse-grained features. Effectively integrating the two with attention mechanisms is challenging: combining both models' patch tokens lengthens the input sequence, and the cost of attention grows quadratically with sequence length, which complicates long-sequence modeling. To address this, we propose MFuser, a novel Mamba-based fusion framework that efficiently combines the strengths of VFMs and VLMs while maintaining linear scalability in sequence length. MFuser consists of two key components: MVFuser, which acts as a co-adapter to jointly fine-tune the two models by capturing both sequential and spatial dynamics; and MTEnhancer, a hybrid attention-Mamba module that refines text embeddings by incorporating image priors. Our approach achieves precise feature locality and strong text alignment without incurring significant computational overhead. Extensive experiments demonstrate that MFuser significantly outperforms state-of-the-art DGSS methods, achieving 68.20 mIoU on synthetic-to-real and 71.87 mIoU on real-to-real benchmarks. The code is available at https://github.com/devinxzhang/MFuser.
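The efficiency argument above rests on processing the concatenated VFM and VLM patch tokens with a recurrent scan whose cost grows linearly in sequence length, rather than with pairwise attention. The following is a minimal sketch of that idea only. It is not MVFuser and not the official MFuser code. The names `concat_tokens` and `selective_scan`, the per-channel parameters `w`, `A`, and `c`, and the simplified discretization (an input-dependent step size with the input projection fixed to 1) are hypothetical assumptions, loosely modeled on Mamba's selective state-space recurrence.

```python
import math
from typing import List

Vector = List[float]


def softplus(z: float) -> float:
    # Numerically stable softplus: log(1 + e^z).
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def concat_tokens(vfm_tokens: List[Vector], vlm_tokens: List[Vector]) -> List[Vector]:
    # Hypothetical fusion step: place both encoders' patch tokens in one sequence.
    # Assumes both token lists share the same channel dimension.
    return vfm_tokens + vlm_tokens


def selective_scan(tokens: List[Vector], w: Vector, A: Vector, c: Vector) -> List[Vector]:
    """Simplified diagonal selective scan (one hidden state per channel).

    For each channel d and step t (hypothetical simplification of Mamba):
        delta   = softplus(w[d] * x[t][d])          # input-dependent step size
        h[d]    = exp(-delta * A[d]) * h[d] + delta * x[t][d]
        y[t][d] = c[d] * h[d]
    One left-to-right pass updates a fixed-size state once per token,
    so the cost is O(L * D) for L tokens with D channels.
    """
    dim = len(w)
    h = [0.0] * dim
    out: List[Vector] = []
    for x in tokens:
        y = []
        for d in range(dim):
            delta = softplus(w[d] * x[d])
            h[d] = math.exp(-delta * A[d]) * h[d] + delta * x[d]
            y.append(c[d] * h[d])
        out.append(y)
    return out


# Toy usage: 4 tokens from each (hypothetical) encoder, 2 channels each.
vfm = [[0.1 * i, -0.2 * i] for i in range(4)]
vlm = [[0.3, 0.1 * i] for i in range(4)]
seq = concat_tokens(vfm, vlm)
y = selective_scan(seq, w=[1.0, 0.5], A=[1.0, 2.0], c=[1.0, 1.0])
print(len(seq), len(y))  # 8 8

L = len(seq)
print("scan updates:", L, "attention scores:", L * L)  # scan updates: 8 attention scores: 64
```

Because each token updates a fixed-size state exactly once, doubling the number of tokens (for example, by fusing the tokens of two encoders) doubles the scan cost, whereas the number of pairwise attention scores quadruples.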