Presented by HubSpot

INBOUND, HubSpot's annual conference for marketing and sales professionals, took place in San Francisco this year, with three days of insights and events across marketing, sales, CX, and AI innovation. It was a mix of the new, like the Creators Corner and the Tech Stack Showcase Stage, and the familiar, like HubSpot HQ, the Spotlight Product Demo Stage, HubSpot Academy Labs for hands-on product learning, and attendee favorite, Braindates.

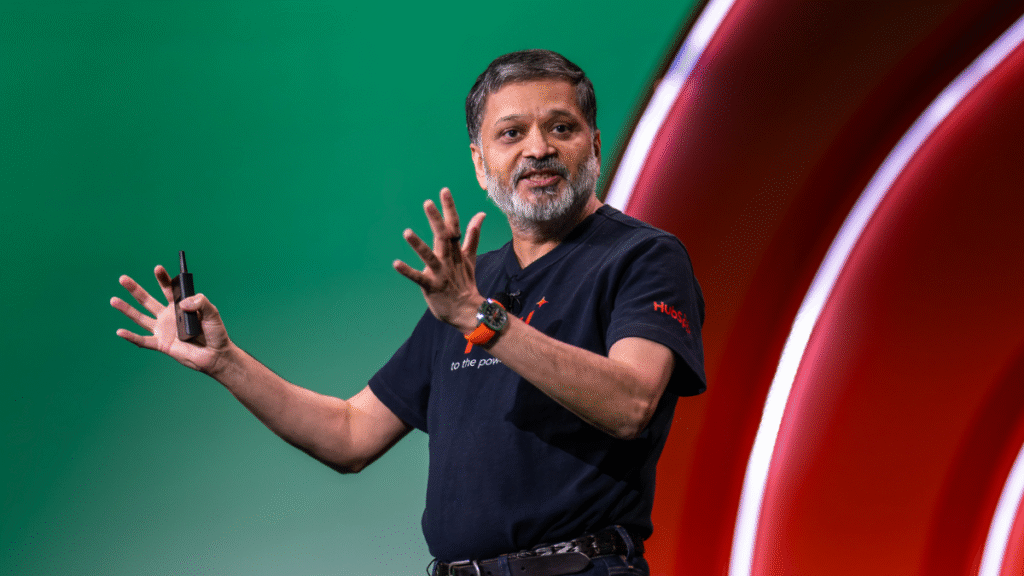

The opening HubSpot Spotlight on Wednesday, delivered by HubSpot co-founder and CTO Dharmesh Shah, dug into the nitty-gritty of AI: what transformation really looks like, why generative AI is more than just glorified autocomplete, and why it's a good thing that it's not going anywhere anytime soon.

"Not everyone is necessarily sold on the merits of AI. It’s the most consequential, and also the most controversial, technology of our time," Shah said. "Is it an exponential opportunity? Or is it an existential threat? I asked AI this question. It thought deeply about it for a while and came up with its answer. Yes."

How do you compete with AI?

An informal poll of 6,000 respondents asked the question, "How do you compete with AI?" Shah said a third of the respondents read that as "How do I compete against it?" But, he added, that frames it as a zero-sum game, which isn't useful to anyone.

"We should think about it as a positive-sum collaboration," he explained. "The goal isn’t to battle the machine. The goal is to build with the machine."

This matters because while AI capability is growing on an exponential curve, the learning curve for using AI is much more linear, and so is the value people see from embracing it. The technology has learned everything it knows from sources as varied as Shakespeare's full body of work, Reddit threads debating whether a hot dog is a sandwich, and Stephen Hawking's academic talks.

"At the end of it all, this machine is capable of predicting, with such accuracy, a really good next word, that what it’s doing is indistinguishable from thinking. That’s why it feels so magical," Shah said. "It’s autocomplete with a PhD in everything. It’s like having 1,000 PhDs in your pocket. Suddenly you can write poetry. You can write prose. You can write computer programs. It’s like Neo in the Matrix, except it’s not 'I know kung fu.' It’s 'I know everything.' You have the world’s knowledge sitting there in your pocket."

There are some drawbacks. LLMs are limited to the training data they were given. Sometimes they hallucinate. They're frozen in time and stateless, but they can still take in new information. User interactions, including chats, go into their long-term memories. They can be given resources to work with, such as PDFs or images. And they can use tools like the internet, databases, APIs with third-party systems and more.

How to better use AI in our daily lives

So if AI is the real deal, and the transformation is important, how should you use AI?

"My pro tip: every time you sit down at a computer to do something, try it first with AI. See if it can help, don’t overthink it," Shah said. "You’ll be surprised. When it doesn’t work, don’t think, oh, well, this doesn’t work. Think, this doesn’t work yet. If it’s an important use case for you, leave yourself a calendar reminder to try it again in three months. Remember, AI is on an exponential curve. There’s a chance, three months or six months from now, that same thing that doesn’t work now will work."

The quality of the results you get depends on three things: the quality of the model you use, the quality of the prompt you write (otherwise known as prompt engineering), and the quality of the context you put in the context window.

The quality of the model. There's been a Cambrian explosion in the world of large language models, but Shah recommends any of the three top-tier frontier models: OpenAI's GPT-5, Claude from Anthropic, or Google Gemini. But don't overthink it, he adds. Pick one you like or that your company is using.

The quality of the prompt. Humans use considerably less than 10% of AI's potential, Shah said. About 95% of the time, users repeat one of the handful of prompts they've found that work for the majority of their use cases. Instead, he recommends a 60/30/10 split. Spend 60% of the time using the prompts that work. Carve out 30% for iteration: taking the prompts you're using right now and seeing if you can improve them for better results. Spend the remaining 10% on experimentation: using AI for things you've never tried before and aren't sure will work. Metaprompting, or asking AI to make the prompt better, can be useful here.
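
For readers who want to put numbers on that split, here is a minimal Python sketch. It is not something Shah presented: the function names, the 300-minute weekly budget, and the wording of the metaprompt are all assumptions made for illustration. It only does the arithmetic and wraps a draft prompt in a request to improve it, without calling any AI service.

```python
# Illustrative only: names, the 300-minute budget, and the metaprompt
# wording are assumptions, not part of the keynote.

# Shares of AI time: proven prompts, iteration, experimentation.
SHARES = {"proven_prompts": 0.60, "iteration": 0.30, "experimentation": 0.10}


def split_ai_time(total_minutes: float) -> dict[str, float]:
    """Divide a block of time according to the 60/30/10 ratio."""
    return {name: round(total_minutes * share, 1) for name, share in SHARES.items()}


def build_metaprompt(draft_prompt: str) -> str:
    """Wrap a draft prompt in a request asking the AI to improve it."""
    return (
        "Improve the prompt below so it produces clearer, more useful results. "
        "Return only the rewritten prompt.\n\n"
        f"Prompt:\n{draft_prompt}"
    )


weekly_plan = split_ai_time(300)
metaprompt = build_metaprompt("Write a follow-up email to a trade show lead.")
print(weekly_plan)
print(metaprompt)
```

The text returned by `build_metaprompt` is what you would paste into whichever model you already use.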

Beyond prompt engineering

Context engineering is just what it sounds like — adding the right context to a request so AI offers better results. Improving the quality of the context includes things like adding custom instructions, which every major LLM includes. That's basically telling the AI what perspective it's working from — the personality, how it should behave, how it should answer and so on. Once you provide custom instructions, it will use them for every answer, and your results improve.
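
As a rough sketch of what that looks like in practice, the Python below assembles custom instructions, supporting context, and a question into a list of role-tagged messages. Many chat APIs accept something similar, but the exact field names vary by provider, so treat the `system` and `user` roles, the `build_messages` helper, and the sample text as assumptions rather than any vendor's actual interface.

```python
# Illustrative only: the helper name, message layout, and sample text
# are assumptions; check your provider's documentation for the real format.

def build_messages(
    custom_instructions: str,
    context_snippets: list[str],
    question: str,
) -> list[dict[str, str]]:
    """Combine standing instructions, context, and a question into messages."""
    # Label each snippet so the model can tell the sources apart.
    context_block = "\n\n".join(
        f"[Context {i}]\n{snippet}"
        for i, snippet in enumerate(context_snippets, start=1)
    )
    if context_block:
        user_content = f"{context_block}\n\nQuestion: {question}"
    else:
        user_content = f"Question: {question}"
    return [
        # Custom instructions: perspective, personality, how to answer.
        {"role": "system", "content": custom_instructions},
        # The request itself, with the relevant context placed ahead of it.
        {"role": "user", "content": user_content},
    ]


messages = build_messages(
    "You are a concise B2B marketing assistant. Answer in plain language.",
    ["Q3 webinar drew 420 registrants.", "Follow-up emails go out on Tuesdays."],
    "Draft a two-sentence recap for the sales team.",
)
for message in messages:
    print(message["role"], "->", message["content"])
```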

The other improvement is a recent one: MCP, or Model Context Protocol, a relatively new way to supply tools to the LLM. Any AI application that supports MCP can immediately and directly connect with the thousands of applications that also support MCP, like HubSpot, to bring that context directly into the LLM.
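
Under the hood, MCP clients and servers exchange JSON-RPC 2.0 messages, with methods such as `tools/list` to discover tools and `tools/call` to invoke one. The Python sketch below only builds those two request payloads. It does not open a connection or perform the protocol's initialization step, and the `search_contacts` tool and its arguments are hypothetical, not taken from HubSpot's MCP server.

```python
# Illustrative only: builds MCP-style JSON-RPC 2.0 request payloads.
# The tool name "search_contacts" and its arguments are hypothetical.
import json
from itertools import count
from typing import Any, Optional

_request_ids = count(1)  # each request gets a fresh id


def mcp_request(method: str, params: Optional[dict[str, Any]] = None) -> str:
    """Serialize one JSON-RPC 2.0 request as a JSON string."""
    message: dict[str, Any] = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": method,
    }
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Ask the server which tools it offers.
list_tools = mcp_request("tools/list")

# Invoke one tool by name with its arguments.
call_tool = mcp_request(
    "tools/call",
    {"name": "search_contacts", "arguments": {"query": "Acme"}},
)

print(list_tools)
print(call_tool)
```

In a real integration, an MCP client library would normally handle this framing for you.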

Taking action with AI agents

"Last year, at Inbound 24, I predicted that this year would be the year of AI agents. I was wrong," Shah said. "This is not the year of AI agents. This is the decade of AI agents. We are just getting started on this massive transformational wave in AI."

Last year Shah launched Agent.ai, a place to discover, use, and build your own AI agents. Over 2 million people are now using the platform. Around 26,000 users have already built agents on Agent.ai, including Shah, who took all of the information from his keynote prep and put it into an AI agent anyone can chat with, at You.ai.

The next steps

How do you take your newfound AI enthusiasm and bring it to your team? Shah offered a game plan, the TEAM strategy: triage, experiment, automate, and measure.

"The goal behind that strategy is to go from AI being led by individual heroics, where you have the super ambitious, super creative person, but translate those individual heroics into team habits," he explained. "Take those things and apply TEAM to go through that process again and again. We are big believers that tomorrow’s teams will be hybrid. This is a time to get started. Even in a small way, start building out your hybrid team."

But the most important thing to remember is that as smart as AI is, humans win on EQ, or emotional quotient. Marrying lived experience with an AI tool will improve your life, and improve your experiences, he added.

"But my challenge to you is, don’t stop there," he said. "The future does not belong to artificial intelligence. It belongs to you, with augmented intelligence. AI isn’t here to replace us. It’s here to replace the parts of our work that don’t bring us joy. To handle the repetitive so we can focus on the remarkable. The better AI gets, the more it allows us to be human."

Sponsored articles are content produced by a company that is either paying for the post or has a business relationship with VentureBeat, and they’re always clearly marked. For more information, contact sales@venturebeat.com.