In 2025, AI chatbots like Grok, ChatGPT, Claude, Perplexity, and Gemini have become indispensable tools for everything from drafting emails to solving complex problems. But as we pour our questions, prompts, and even personal files into these platforms, a critical question looms: what happens to our data? Specifically, how do these companies use our interactions to train their AI models, and what control do we have over it? Let’s dive into the privacy policies of these five major AI players to understand their approaches, based on the latest information available as of August 29, 2025. Note that policies evolve, so always check official sources for the most current details.

Grok (xAI): Balancing openness with control

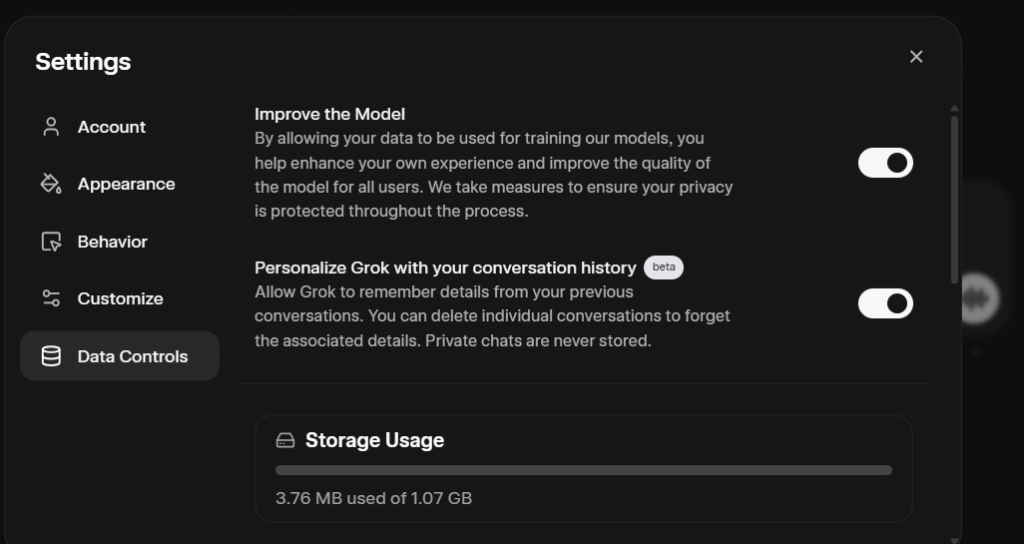

Grok, created by xAI, is designed to be a truth-seeking assistant with a quirky edge, often pulling real-time insights from X (formerly Twitter). Its privacy policy reveals that xAI may use your prompts, responses, and interactions to improve its models’ language understanding, accuracy, and safety. If you’re using Grok through X, your posts and interactions could also be tapped for training unless you opt out. The good news? xAI emphasizes user control. You can disable data usage for training via Grok’s settings on the mobile app or grok.com (under Settings > Data Controls) or by emailing privacy@x.ai. For X users, there’s a separate toggle in X’s settings under Privacy & Safety > Data Sharing and Personalization > Grok. A standout feature is Grok’s Private Chat mode, which ensures your data isn’t used for training at all. Deleted conversations are typically removed within 30 days, unless retained for safety or legal reasons. For businesses, enterprise API agreements offer separate terms, keeping customer data distinct from training processes. xAI also notes it avoids actively seeking sensitive data, aiming for a privacy-conscious approach.

ChatGPT (OpenAI): Opt-out simplicity

ChatGPT, OpenAI’s wildly popular chatbot, powers everything from creative writing to coding. Its privacy policy is clear: your prompts, files, images, and audio may be used to enhance services, including model training. However, OpenAI offers a straightforward opt-out process, detailed at its help center. All you have to do is go to its privacy portal and click on “do not train on my content.” Once you opt out, your future data won’t be used for training, though there’s no promise that data already collected will be excluded. For business users, like those on enterprise plans or using the API, separate customer agreements apply, often with stricter protections. OpenAI makes no explicit commitment to keep your data out of training unless you opt out, so privacy-conscious users should change this setting proactively. The policy’s simplicity makes it accessible, but it puts the onus on users to take action.

Claude (Anthropic): A shift to opt-in

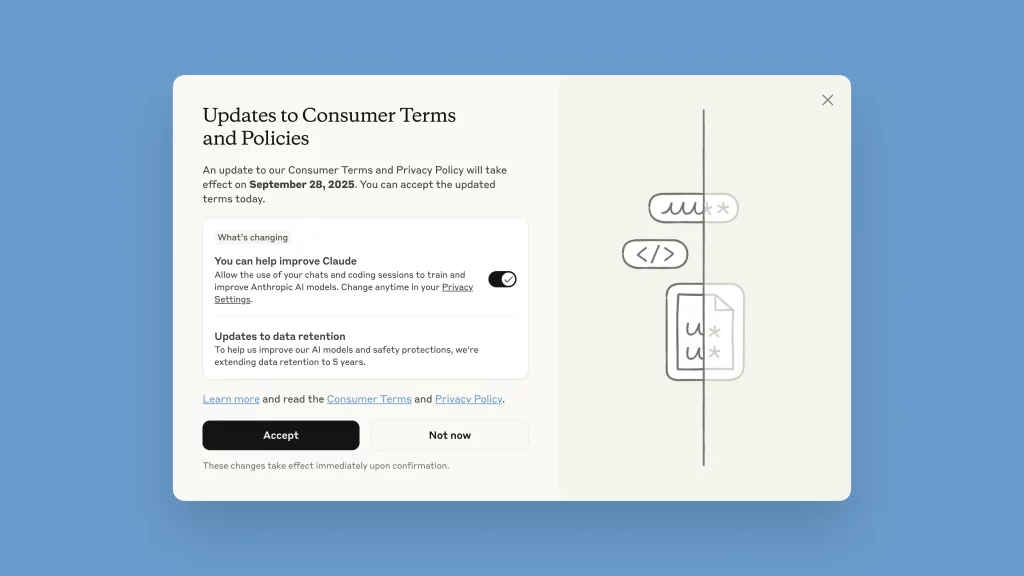

Claude, built by Anthropic, has long been praised for its safety-first approach, but a recent policy update (effective September 2025) has stirred discussion. Previously, Claude didn’t use consumer conversations for training by default. Now, users are asked to choose whether their prompts, responses, and coding sessions may be used to train future models, and the setting is enabled by default, so in practice you have to switch it off to keep your data out. You can disable it using the toggle in the popup prompt or in settings, but once data has been used for training, it can’t be retroactively withdrawn. Anthropic emphasizes filtering out sensitive information and encrypting data, with retention of up to five years for users who allow training (compared to 30 days previously). If you don’t accept the new terms by September 28, 2025, you’ll lose access to Claude. Enterprise users, such as those on Claude for Work or API integrations, are exempt, with data governed by separate agreements. This shift has raised eyebrows, as the on-by-default setting feels less privacy-friendly than before, but Anthropic insists it’s necessary for model improvement.

Perplexity (Perplexity AI): Research-focused with opt-out

Perplexity, the research-oriented AI, blends chatbot functionality with real-time web search, offering citations for its answers. Its policy states that interactions – questions, prompts, and outputs – may be used to improve services, including AI models. However, Perplexity excludes email data (like from Gmail integrations) from training. Users can opt out through the settings page if logged in or request account deletion by emailing support@perplexity.ai, with deletion completed within 30 days. For enterprise users, such as those on API or Enterprise Pro plans, Perplexity acts as a data processor under separate terms, often with stricter controls outlined in a Data Processing Addendum. Perplexity’s focus on transparency aligns with its research mission, but its default data use means privacy-conscious users should act quickly to adjust settings.

Gemini (Google): Granular but complex

Google’s Gemini, a multimodal powerhouse, integrates seamlessly with Google’s ecosystem. Its privacy policy is detailed but complex, reflecting Google’s broader data practices. Chats, recordings, files, images, feedback, and related data may be used to improve machine-learning technologies, and human reviewers might process this data. Gemini offers multiple privacy controls: you can turn off Gemini Apps Activity at myactivity.google.com/product/gemini, with a separate setting for audio or Gemini Live recordings. Temporary chats aren’t used for training unless you submit feedback, and data from connected apps (like Google Docs) is protected from use or review. Google coarsens location data for privacy and retains chats for only 72 hours if activity tracking is off. For work or school accounts, different terms apply via the Generative AI in Google Workspace Privacy Hub. While Gemini’s controls are robust, navigating them requires familiarity with Google’s account settings, which can feel overwhelming.

Why it matters and what you can do

Each of these AI platforms uses user data to fuel innovation, but their approaches vary. Grok and ChatGPT offer clear opt-out paths, while Claude’s new training setting, enabled by default, has sparked debate. Perplexity balances research needs with user control, and Gemini’s granular settings cater to Google’s ecosystem but demand more user effort. For businesses, enterprise plans often provide stronger protections, isolating data from training pipelines.

If privacy is a priority, take these steps: check each platform’s settings to disable data use for training, use private or temporary chat modes where available (like Grok’s Private Chat), and limit sensitive inputs. Enterprise users should review specific agreements. Policies can change, so regularly visit official sites – x.ai for Grok, openai.com for ChatGPT, anthropic.com for Claude, perplexity.ai for Perplexity, and gemini.google.com for Gemini – to stay informed.

As AI becomes a daily companion, understanding how your data shapes these models empowers you to make informed choices. Whether you’re a casual user or a business, there’s a balance to strike between leveraging AI’s power and protecting your privacy.
