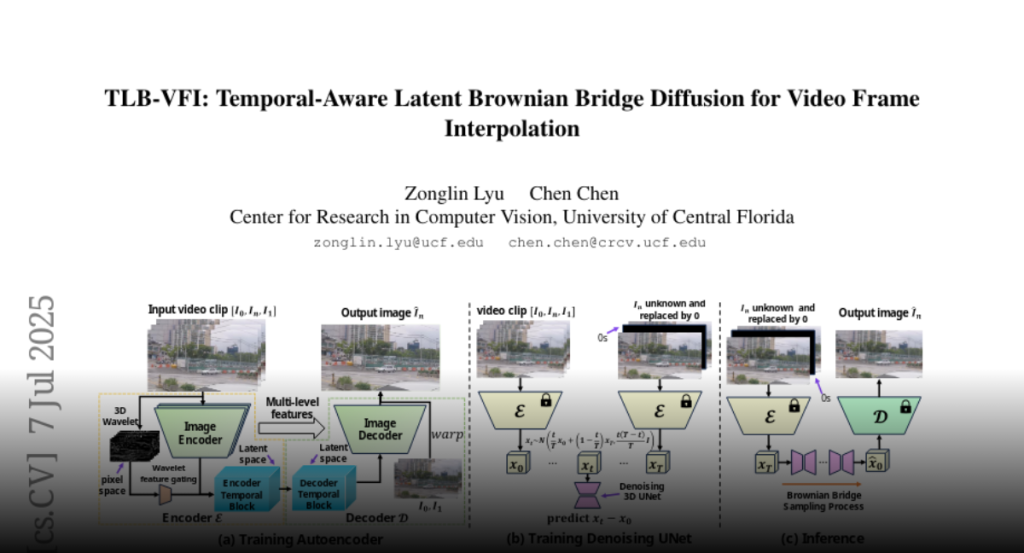

Temporal-Aware Latent Brownian Bridge Diffusion for Video Frame Interpolation (TLB-VFI) improves video frame interpolation by efficiently extracting temporal information, reducing parameters, and requiring less training data compared to existing methods.

Video Frame Interpolation (VFI) aims to predict the intermediate frame I_n (we use n to denote time in videos to avoid notation overload with the timestep t in diffusion models) based on two consecutive neighboring frames I_0 and I_1. Recent approaches apply diffusion models (both image-based and video-based) to this task and achieve strong performance. However, image-based diffusion models are unable to extract temporal information and are relatively inefficient compared to non-diffusion methods. Video-based diffusion models can extract temporal information, but they are too large in terms of training scale, model size, and inference time. To mitigate these issues, we propose Temporal-Aware Latent Brownian Bridge Diffusion for Video Frame Interpolation (TLB-VFI), an efficient video-based diffusion model. By extracting rich temporal information from video inputs through our proposed 3D-wavelet gating and temporal-aware autoencoder, our method achieves a 20% improvement in FID on the most challenging datasets over the recent state-of-the-art image-based diffusion models. Meanwhile, owing to this rich temporal information, our method achieves strong performance with 3x fewer parameters. This parameter reduction results in a 2.3x speedup. By incorporating optical flow guidance, our method requires 9000x less training data and uses over 20x fewer parameters than video-based diffusion models. Code and results are available at our project page: https://zonglinl.github.io/tlbvfi_page.