The study identifies and analyzes OCR Heads within Large Vision Language Models, revealing their unique activation patterns and roles in interpreting text within images.

Despite significant advancements in Large Vision Language Models (LVLMs), a

gap remains, particularly regarding their interpretability and how they locate

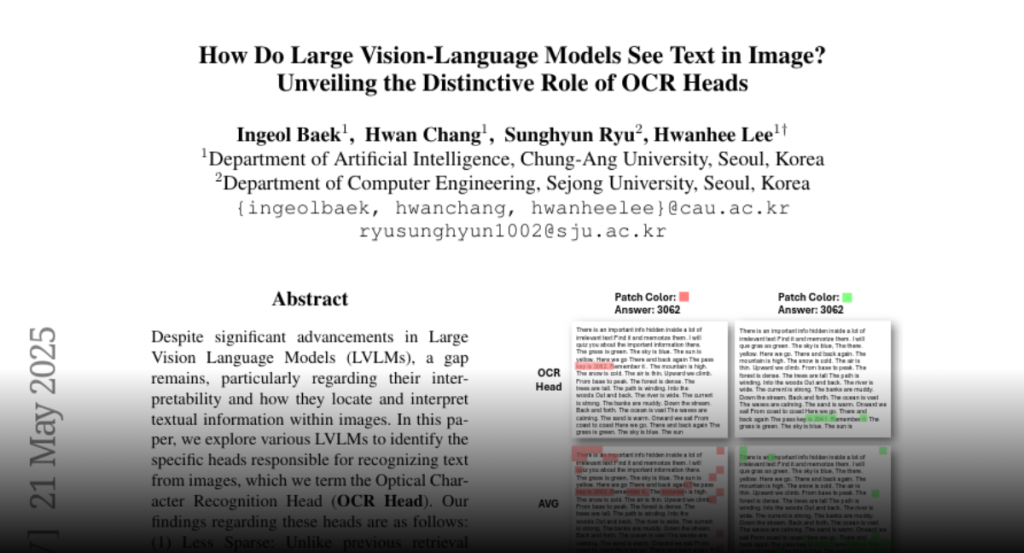

and interpret textual information within images. In this paper, we explore

various LVLMs to identify the specific heads responsible for recognizing text

from images, which we term the Optical Character Recognition Head (OCR Head).

Our findings regarding these heads are as follows: (1) Less Sparse: Unlike

previous retrieval heads, a large number of heads are activated to extract

textual information from images. (2) Qualitatively Distinct: OCR heads possess

properties that differ significantly from general retrieval heads, exhibiting

low similarity in their characteristics. (3) Statically Activated: The

frequency of activation for these heads closely aligns with their OCR scores.

We validate our findings in downstream tasks by applying Chain-of-Thought (CoT)

to both OCR and conventional retrieval heads and by masking these heads. We

also demonstrate that redistributing sink-token values within the OCR heads

improves performance. These insights provide a deeper understanding of the

internal mechanisms LVLMs employ in processing embedded textual information in

images.