OpenAI is moving to publish the results of its internal AI model safety evaluations more regularly in what the outfit is pitching as an effort to increase transparency.

On Wednesday, OpenAI launched the Safety Evaluations Hub, a webpage showing how the company’s models score on various tests for harmful content generation, jailbreaks, and hallucinations. OpenAI says that it’ll use the hub to share metrics on an “ongoing basis,” and that it intends to update the hub with “major model updates” going forward.

“As the science of AI evaluation evolves, we aim to share our progress on developing more scalable ways to measure model capability and safety,” wrote OpenAI in a blog post. “By sharing a subset of our safety evaluation results here, we hope this will not only make it easier to understand the safety performance of OpenAI systems over time, but also support community efforts to increase transparency across the field.”

OpenAI says that it may add more evaluations to the hub over time.

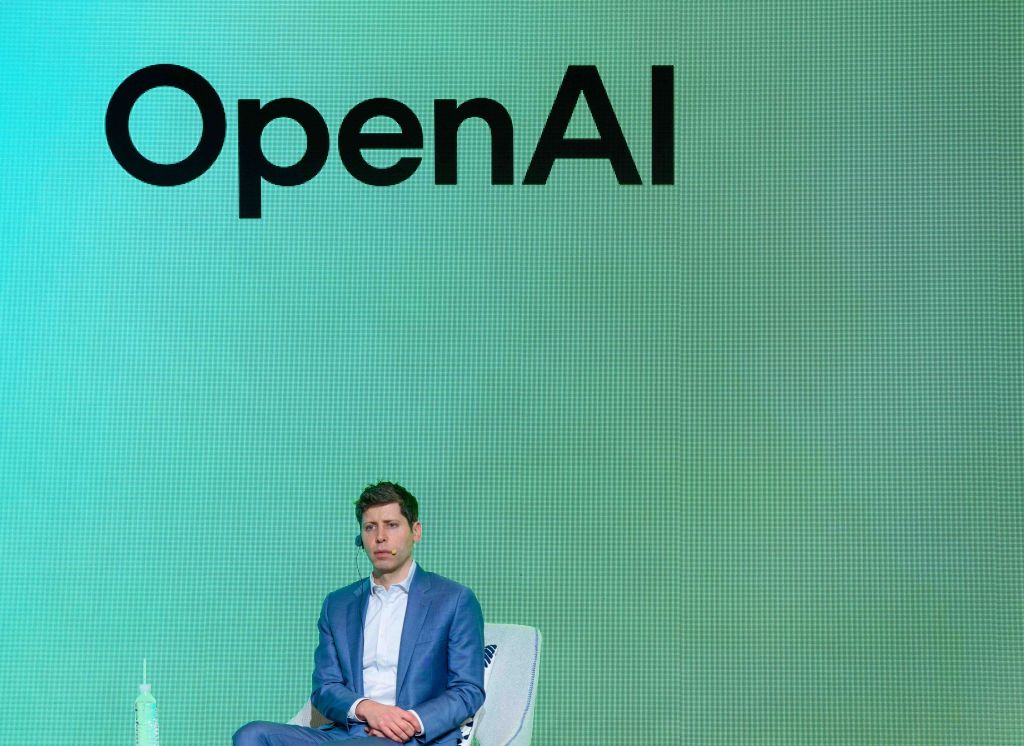

In recent months, OpenAI has raised the ire of some ethicists for reportedly rushing the safety testing of certain flagship models and failing to release technical reports for others. The company’s CEO, Sam Altman, also stands accused of misleading OpenAI executives about model safety reviews prior to his brief ouster in November 2023.

Late last month, OpenAI was forced to roll back an update to GPT-4o, the default model powering ChatGPT, after users began reporting that it responded in an overly validating and agreeable way. X became flooded with screenshots of ChatGPT applauding all sorts of problematic, dangerous decisions and ideas.

OpenAI said that it would implement several fixes and changes to prevent such incidents in the future, including introducing an opt-in “alpha phase” for some models that would allow certain ChatGPT users to test the models and give feedback before launch.