As soon as ChatGPT became widely available, it stunned the world with its ability to answer questions in natural language almost immediately. It still does that today, and its performance has improved significantly as well.

However, users quickly discovered that chatbots like ChatGPT do not always provide accurate information. They convincingly hallucinate. That’s why I’ve been warning you about AI hallucinations since the early days of ChatGPT and routinely reminding you to ask for sources and check the facts the chatbot spews.

ChatGPT and its rivals have come a long way since then. OpenAI and other AI firms will give you sources for the claims the AI makes, especially when an internet search is involved. Despite those upgrades, I still have custom instructions telling the AI to give me clear, working links for everything it says. I still correct the AI when it says something incorrect.

Hallucinations will probably disappear in more advanced AI chatbots in the not-too-distant future. But it might take a while to get there. ChatGPT o3 and o4-mini are the best proof of that. They’re ChatGPT’s most advanced reasoning models, exceeding the performance of ChatGPT o1 in various fields.

Strangely, however, ChatGPT o3 and o4-mini hallucinate more than their predecessors, and OpenAI has acknowledged as much itself. It’s unclear what causes that behavior.

OpenAI detailed the hallucination stats for o3 and o4-mini in the System Card file for the new models. The numbers line up with what users have been reporting, so it’s no wonder you’ll see plenty of ChatGPT users mention this unusual behavior.

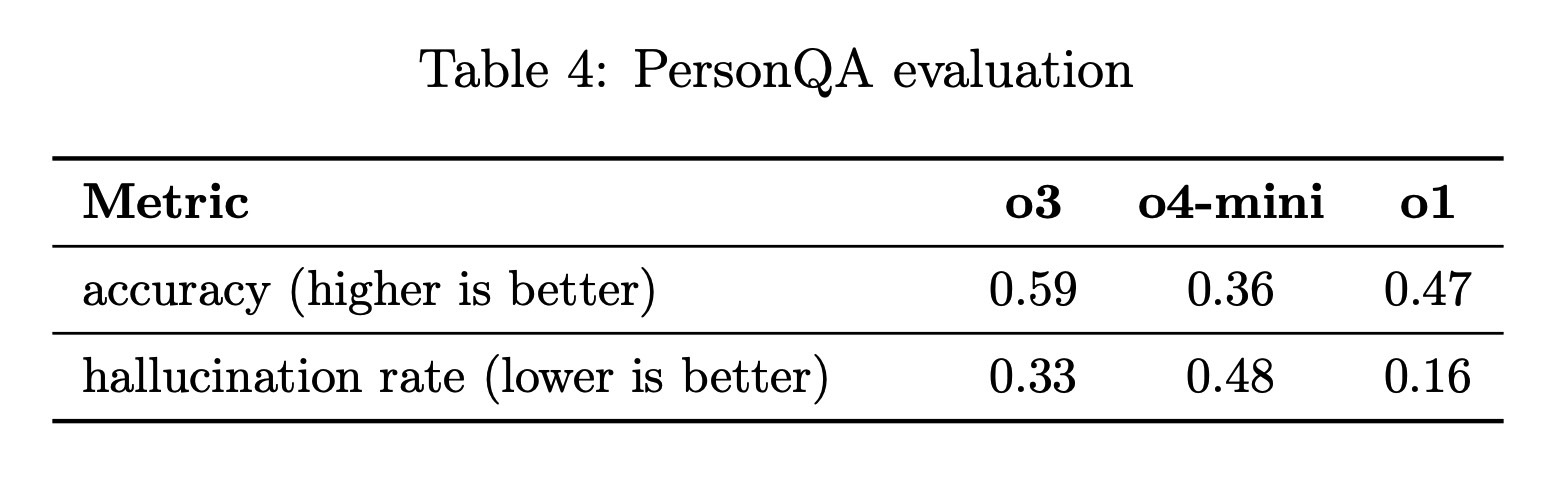

“We tested OpenAI o3 and o4-mini against PersonQA, an evaluation that aims to elicit hallucinations. PersonQA is a dataset of questions and publicly available facts that measures the model’s accuracy on attempted answers,” OpenAI writes. “We consider two metrics: accuracy (did the model answer the question correctly) and hallucination rate (checking how often the model hallucinated).”

“The o4-mini model underperforms o1 and o3 on our PersonQA evaluation. This is expected, as smaller models have less world knowledge and tend to hallucinate more. However, we also observed some performance differences comparing o1 and o3. Specifically, o3 tends to make more claims overall, leading to more accurate claims as well as more inaccurate/hallucinated claims. More research is needed to understand the cause of this result.”

The OpenAI team also published the table above, which shows that ChatGPT o3 is more accurate than o1 but hallucinates at twice the rate of o1. As for o4-mini, the smaller model produces less accurate responses than both o1 and o3, and hallucinates at three times the rate of o1.
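It may seem odd that a model can be both more accurate and more prone to hallucination, but the math works out once you remember that a model can also decline to answer. Here is a minimal Python sketch of that idea. Everything in it is my own assumption rather than OpenAI’s grading code: the three grade labels, the `personqa_style_metrics` helper, the choice to divide by the total number of questions, and the made-up grade lists, which are not the figures from the System Card.

```python
from collections import Counter


def personqa_style_metrics(grades):
    """Compute accuracy and hallucination rate from per-question grades.

    Assumption: each grade is one of "correct", "hallucinated", or
    "abstained", and both metrics are divided by the total number of
    questions. OpenAI's exact grading scheme may differ.
    """
    total = len(grades)
    if total == 0:
        raise ValueError("need at least one graded answer")
    counts = Counter(grades)
    return {
        "accuracy": counts["correct"] / total,
        "hallucination_rate": counts["hallucinated"] / total,
    }


# Hypothetical grades for illustration only (not System Card data).
# The "cautious" model abstains often; the "talkative" one rarely does.
cautious = ["correct"] * 4 + ["hallucinated"] * 2 + ["abstained"] * 4
talkative = ["correct"] * 5 + ["hallucinated"] * 4 + ["abstained"] * 1

cautious_metrics = personqa_style_metrics(cautious)
talkative_metrics = personqa_style_metrics(talkative)

print("cautious: ", cautious_metrics)
print("talkative:", talkative_metrics)
```

In this toy example, the talkative model answers more questions correctly (5 out of 10 versus 4 out of 10) but also makes up twice as many answers (4 versus 2), simply because it abstains less. That is the same pattern OpenAI describes for o3 compared to o1: more claims overall, so more right ones and more wrong ones.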

It is fascinating that OpenAI has trained more advanced reasoning models that can use internet search while reasoning and incorporate images into their chain of thought, but the company can’t explain why hallucination rates have gone up.

These reasoning AI models can do some amazing things, like deep analysis of images that lets them determine where a photo was taken just by looking at it. They can browse the web thoroughly to get their information. Yet they will make up stuff along the way. They can’t stop themselves from inventing facts, and OpenAI hasn’t yet found the training recipe to fix that.

I can’t say I’ve encountered many o3 and o4-mini hallucinations, but I did spot the latter jumping to at least one conclusion faster than it should have. It likely hallucinated information in the process. All I know is that I’ll keep checking the AI’s claims for the foreseeable future, no matter what models I run.