Charts are ubiquitous, as people often use them to analyze data, answer

questions, and discover critical insights. However, performing complex

analytical tasks with charts requires significant perceptual and cognitive

effort. Chart Question Answering (CQA) systems automate this process by

enabling models to interpret and reason with visual representations of data.

However, existing benchmarks like ChartQA lack real-world diversity and have

recently shown performance saturation with modern large vision-language models

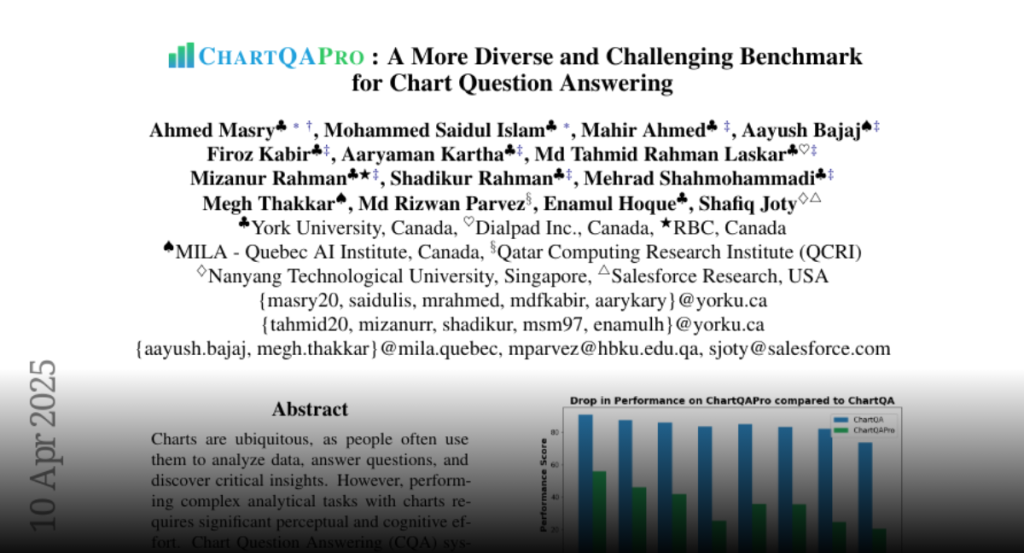

(LVLMs). To address these limitations, we introduce ChartQAPro, a new benchmark

that includes 1,341 charts from 157 diverse sources, spanning various chart

types, including infographics and dashboards, and featuring 1,948 questions in

various types, such as multiple-choice, conversational, hypothetical, and

unanswerable questions, to better reflect real-world challenges. Our

evaluations with 21 models show a substantial performance drop for LVLMs on

ChartQAPro; e.g., Claude Sonnet 3.5 scores 90.5% on ChartQA but only 55.81% on

ChartQAPro, underscoring the complexity of chart reasoning. We complement our

findings with detailed error analyses and ablation studies, identifying key

challenges and opportunities for advancing LVLMs in chart understanding and

reasoning. We release ChartQAPro at https://github.com/vis-nlp/ChartQAPro.